Data reconciliation finance: best practices when accuracy is non-negotiable

Published on :

April 6, 2026

Every finance team reconciles data. The question is not whether they do it, but when, how completely, and what they do with the results. A team that reconciles its bank accounts at month-end and stops there has a very different accuracy posture than a team that reconciles every transaction flow continuously, and the difference is visible not in normal operations, but the moment something matters.

A board presentation where two revenue figures don't align. An audit where the external auditor finds a discrepancy the finance team hadn't noticed. A due diligence process where the acquirer's analysis contradicts the company's own numbers. These are the moments when the gap between "we reconcile regularly" and "our financial data is accurate" becomes expensive.

Financial data reconciliation is the discipline of ensuring that the data in your financial systems accurately represents the same underlying reality, across systems, across time, and across the people who depend on those numbers to make decisions. This article maps the best practices that make reconciliation reliable enough to support those decisions when accuracy genuinely cannot be compromised.

Why financial data reconciliation is harder than it looks

The intuitive model of financial data reconciliation is simple: take the same number from two systems, confirm they match, move on. In practice, the reconciliation challenge is structural, and it compounds with every additional system, entity, and transaction type in the finance function's scope.

The multi-system problem

Modern finance operations run across a stack of systems that were never designed to hold identical versions of the same financial data. An ERP records transactions. A CRM records commercial commitments. A billing platform generates revenue events. A banking portal records actual cash movements. A payment processor holds settlement data. Each system captures a fragment of the financial reality, and none of them were built to stay synchronised with the others.

Data alignment across systems requires bridging the gaps between these fragmented records. When the ERP's accounts receivable balance doesn't match the bank's incoming payment records, the discrepancy is not in either system, it is in the space between them, where transactions in progress, timing differences, and classification inconsistencies accumulate undetected until someone deliberately compares the two.

The number of potential reconciliation points grows quadratically with the number of systems. An organisation with five financial systems has ten possible bilateral reconciliation pairs. With ten systems, that's 45 pairs. Most finance teams reconcile a small fraction of these systematically, the rest is left to periodic spot checks and the assumption that the ERP is the source of truth.

The timing problem

Financial data is not static. A transaction recorded in the ERP at 11:47 PM on the last day of the month may not appear in the bank statement until the following business day. A payment processed in the billing system on a Friday may settle in the bank account three days later. An accrual recorded at period-end is reversed at the beginning of the next period.

These timing differences are normal and expected, but they create reconciliation windows during which the same underlying transaction appears in different states in different systems. Without a reconciliation approach that understands and accounts for these windows, timing differences get flagged as discrepancies, inflate exception queues, and obscure the genuine discrepancies that need to be investigated.

The classification problem

The same transaction can be classified differently across systems by design. A supplier payment recorded as "accounts payable, supplier X" in the ERP may appear as a SEPA transfer to a specific IBAN in the bank statement, with no reference to the supplier name. A customer payment may appear as a Stripe settlement in the bank, aggregating multiple individual customer transactions that the ERP records separately.

Without a systematic data reconciliation logic layer that understands these classification mappings, cross-system reconciliation requires manual interpretation at every point, the analyst who knows that "STRP-BATCH-20241203" is the Stripe settlement that corresponds to the sum of twelve individual customer invoices recorded in the billing system.

This knowledge is invaluable. It is also fragile, person-dependent, and does not scale.

The 3 contexts where "accurate enough" is not accurate enough

Best practices for financial data reconciliation differ depending on the stakes attached to the accuracy requirement. Three contexts in the finance function carry accuracy requirements that are genuinely non-negotiable.

Board and investor reporting

When a company presents financial results to its board or external investors, the numbers have legal and reputational weight. A revenue figure that differs between the management report and the ERP ledger is not an acceptable variance, it is a governance failure. An ARR calculation that cannot be traced back to the underlying billing transactions is not a metric, it is an estimate.

For SaaS companies specifically, the gap between billing system data, CRM records, and accounting entries is a well-documented source of reporting inaccuracy. Revenue recognised in the billing platform may not match the accounting system's recognitions if timing, currency conversion, or contract modification logic differs between the two. Financial reconciliation between these systems is not an administrative task, it is the work that makes board-level numbers credible.

External audit and regulatory compliance

External auditors test financial data for accuracy by tracing sample transactions from source documents through to financial statement line items. When that trace hits a discrepancy, an invoice amount that doesn't match the GL entry, a cash receipt that doesn't reconcile to the bank statement, the auditor's response is to expand the sample, because one discrepancy is evidence that the population may not be reliable.

Audit-ready finance processes are not audit processes, they are the continuous data quality practices that ensure the finance function can respond to audit sampling without finding surprises that the team itself didn't know about. The organisation that discovers reconciliation discrepancies during an audit is in a fundamentally different position from the organisation that can demonstrate continuous reconciliation with a complete audit trail.

Pre-payment financial controls

The third context is more operational but equally non-negotiable: the validation of financial data before payments are committed. An invoice approved for payment based on a price that doesn't match the contracted rate, or a settlement reconciliation that misses a duplicate, creates a financial loss that is real and immediate, not a reporting risk but a cash loss.

Pre-payment controls and pre-decision control are the reconciliation disciplines applied at the transaction level, validating that the data supporting a payment decision is accurate before the commitment is made, not after. This is where financial data accuracy translates most directly into financial outcomes.

5 best practices for financial data reconciliation when accuracy is non-negotiable

Practice 1 - Define a source of truth hierarchy for every data type

The most common cause of unresolvable reconciliation discrepancies is the absence of a defined answer to the question: when two systems report different values for the same thing, which one is right?

Source of truth validation requires establishing a clear hierarchy of authoritative systems for each data type in the finance function's scope. For transaction-level amounts: the bank statement is typically authoritative over the ERP, because the bank statement reflects actual cash movements rather than recorded transactions. For contracted prices: the contract management system is authoritative over the procurement system's PO, because POs may not accurately reflect the latest negotiated terms. For revenue metrics: the billing system's transaction records are authoritative over the CRM's opportunity values, because billing records are grounded in actual invoicing rather than sales pipeline estimates.

This hierarchy needs to be documented, communicated across the finance function, and embedded in the reconciliation tooling, so that when a discrepancy is identified, the resolution path is defined by the hierarchy rather than requiring a judgment call each time.

Practice 2 - Normalise data before reconciling, not after

One of the most consistent sources of false reconciliation discrepancies is format inconsistency between systems. The same value expressed differently in two systems, a date in DD/MM/YYYY format versus MM/DD/YYYY, a currency amount with a period versus a comma as the decimal separator, a supplier name in different case or abbreviation, will fail a comparison even when the underlying values are identical.

Data normalisation applied before reconciliation runs, converting all values to a consistent internal representation regardless of their source system format, eliminates this category of false discrepancy before it inflates exception queues. This is a pre-processing step, not a matching step: the goal is to ensure that the comparison operation the reconciliation engine performs operates on semantically equivalent inputs.

Intelligent data extraction and structured data preparation are the technical disciplines behind this practice. Phacet's data preparation agents apply normalisation at the point of ingestion, every document and transaction record is standardised before it enters the reconciliation workflow, ensuring that format differences between source systems don't masquerade as value differences.

Practice 3 - Reconcile continuously, not periodically

The periodic reconciliation model, reconcile at month-end, quarter-end, or when something looks wrong, creates a structural accuracy gap that compounds over time. In a system where reconciliation happens monthly, discrepancies can accumulate for up to 30 days before they are identified. By the time the reconciliation process runs, the transaction record may have aged to the point where investigation requires reconstructing the circumstances of the original transaction from memory and incomplete documentation.

Continuous finance control treats reconciliation as a permanent operating state rather than a periodic event. Every transaction is reconciled against its cross-system counterpart as it occurs, or within the settlement window appropriate to the transaction type. Discrepancies are identified while the underlying circumstances are recent, the documentation is complete, and the parties involved can be contacted directly.

This is not simply better reconciliation, it is a different accuracy posture. Continuous reconciliation means that the financial data in any system at any point in time reflects the best available version of the truth, not the best available version as of the last reconciliation cycle.

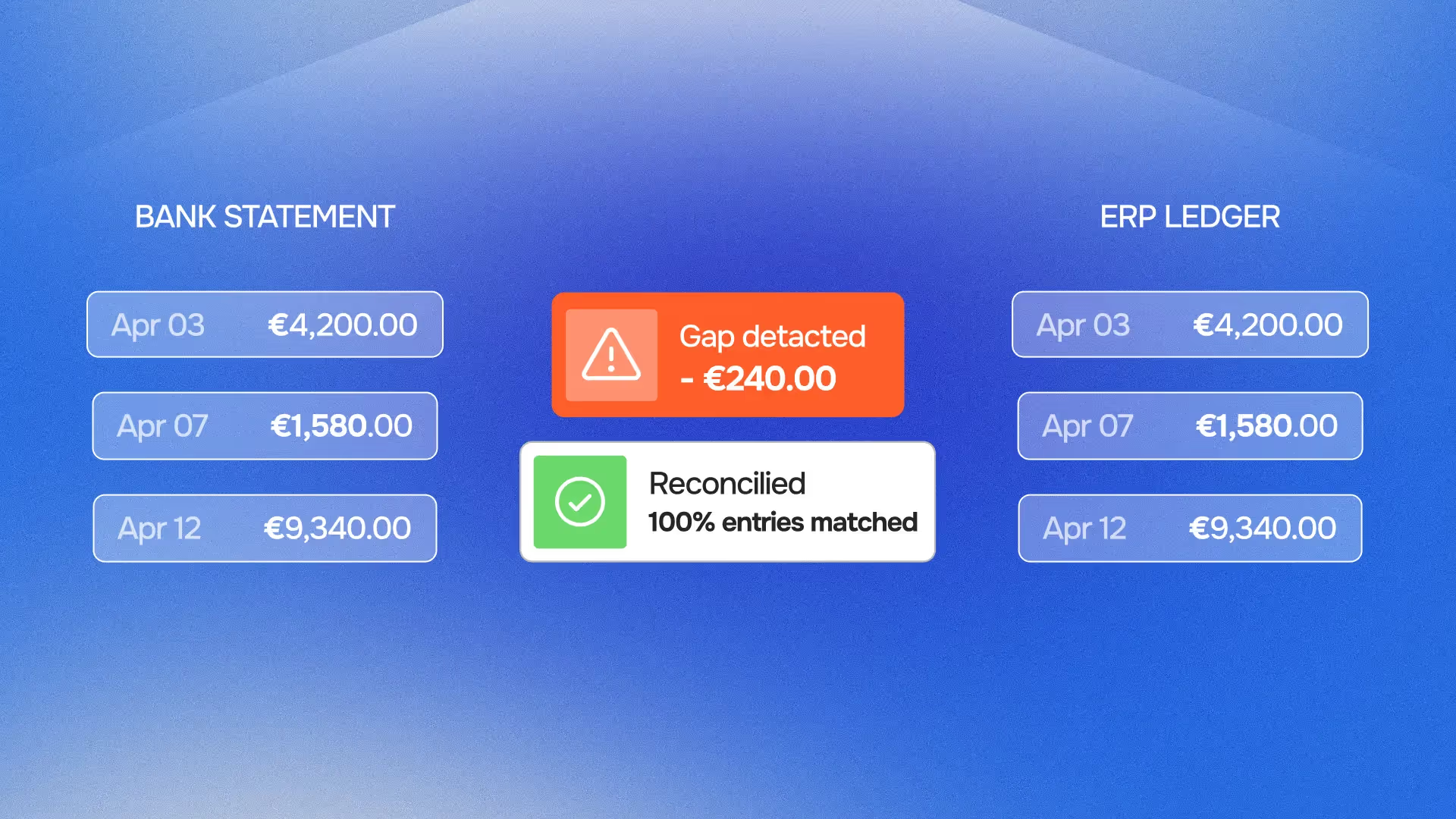

The bank reconciliation automation agent that Phacet deploys for treasury operations applies this logic to cash movement reconciliation: every incoming and outgoing bank transaction is compared to its ERP counterpart on a daily basis, and unmatched items are surfaced immediately rather than accumulated in an end-of-month backlog. The agent for reconciling bank transactions and detecting unmatched flows is the practical implementation of this continuous model.

Practice 4 - Separate discrepancy types and resolve each differently

Not all reconciliation discrepancies require the same response. Treating all unmatched items as equivalent exceptions is a significant source of resolution backlog and misallocated effort. Effective reconciliation distinguishes between at least three categories of discrepancy:

- Genuine data errors: the values in two systems differ because one of them is wrong, a transaction recorded at the incorrect amount, a classification applied inconsistently, a duplicate entry that should be removed. These require investigation and correction.

- Timing differences: the values in two systems differ because they represent the same transaction at different points in its lifecycle, a payment that has been recorded in the ERP but not yet settled in the bank. These require monitoring until the expected settlement event occurs, not immediate investigation.

- Classification differences: the values in two systems differ because the systems use different representations for the same economic event, a Stripe batch payment that needs to be disaggregated to match individual invoice records. These require mapping logic, not error correction.

Exception-based finance review that routes each discrepancy type to the appropriate resolution workflow, data correction, settlement monitoring, or classification mapping, handles reconciliation exceptions at their correct priority level rather than queuing all exceptions for the same human review process.

Phacet's reconciliation agents implement this categorisation automatically. Unmatched items are classified by discrepancy type, timing difference, classification gap, or genuine error, and routed to the appropriate workflow. Reviewers see only the discrepancies that require human judgment: the genuine errors and the unresolvable classification questions. Timing differences resolve automatically when the expected settlement events occur.

Practice 5 - Build reconciliation documentation into the process, not retrospectively

The audit trail for financial reconciliation is not a report generated at audit time. It is the continuous record of every comparison performed, every discrepancy identified, every resolution path taken, and every human decision made, generated in real time as the reconciliation process operates.

Organisations that construct audit documentation retrospectively, pulling together emails, spreadsheet logs, and ERP records to demonstrate that a particular reconciliation was performed and resolved correctly, are in a fundamentally weaker position than organisations whose reconciliation tooling generates this documentation automatically. Retrospective documentation can be incomplete, inconsistent, or impossible to construct for transactions that occurred months ago.

Explainable decision control, the principle that every automated reconciliation decision should be traceable to the specific inputs, logic, and thresholds that produced it, is the design requirement for reconciliation tooling that needs to be audit-defensible. Phacet's agents generate a complete, timestamped decision record for every transaction processed: the data values compared, the comparison result, the discrepancy category if applicable, and the resolution action taken. This documentation is available on demand, without reconstruction.

For the practical implications of building audit-ready processes in finance operations, see audit-ready finance processes and our analysis of AI financial data automation and end-to-end transparency.

Why periodic reconciliation fails the accuracy test

The periodic reconciliation model is a product of the constraints that existed before continuous automation was viable. Monthly closes were the practical frequency at which a finance team could manually reconcile its accounts. Exception investigation required analyst time that could only be allocated periodically. Reporting cycles were set to accommodate the time required to complete the reconciliation before numbers were published.

Those constraints no longer exist for organisations that have deployed automated reconciliation. But the periodic model persists, not because it is the right accuracy approach, but because it is the established one.

The accuracy failure of periodic reconciliation has three dimensions:

The accumulation problem.

In a monthly reconciliation cycle, 30 days of transactions accumulate before any comparison is made. A discrepancy introduced on day 2 of the month may be obscured by subsequent transactions that partially offset it. By day 30, the reconciliation workload is 15–20 times what it would be if transactions were reconciled daily, and the signal-to-noise ratio in the exception queue is correspondingly lower.

The staleness problem.

Financial data accuracy requires the ability to investigate and resolve discrepancies. Investigation quality degrades with time: the person who processed a transaction on day 2 may not remember the details by day 30. The supplier contact who could clarify a price variance may have changed. The documentation associated with a delivery may have been archived. Continuous reconciliation surfaces discrepancies while they are still fresh and resolvable.

The illusion problem.

A monthly reconciliation that closes with zero unresolved exceptions gives the impression of accuracy. But what it actually demonstrates is that the discrepancies visible at month-end have been resolved, not that the financial data is accurate throughout the month. Board reports, management decisions, and payment authorisations made during the month are based on unreconciled data. The reconciliation cycle validates the data retroactively; it does not make the data accurate in real time.

Data trustworthiness, the property that data can be relied upon for decisions at any point in time, not just at the end of a reconciliation cycle, requires continuous reconciliation. It cannot be achieved through periodic processes, regardless of how rigorously those processes are executed.

Reconciliation across the finance function: where the practices apply

The five best practices above apply across every reconciliation context in the finance function, but the specific implementation differs by context.

Bank reconciliation. The most mature reconciliation context in most organisations, but frequently still periodic. Continuous bank reconciliation applies the five practices to cash movement data: a defined hierarchy (bank statement is authoritative), normalisation of bank transaction descriptions to ERP reference formats, daily or real-time matching, automatic categorisation of timing differences versus genuine discrepancies, and a complete audit trail per transaction. The use case page for cash reconciliation covers the implementation specifics, and the cash flow automation article covers the downstream treasury benefits.

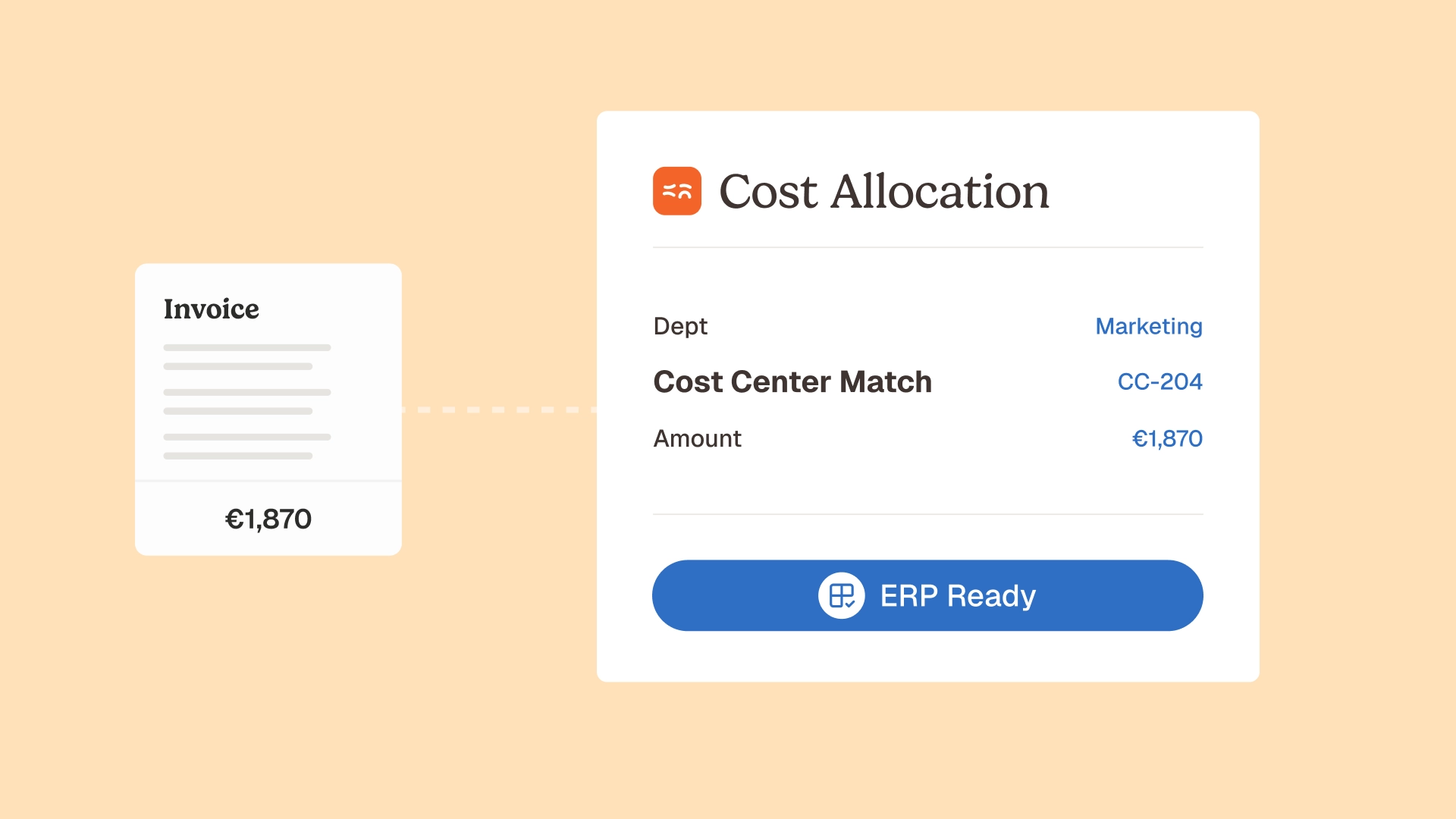

Invoice and AP reconciliation. Three-way matching of PO, delivery confirmation, and invoice is a reconciliation process, applying the same principles to the procurement cycle. The additional complexity here is the multi-document nature of the comparison and the contract-level reference data required for price compliance. Our article on why invoice matching fails at scale covers the data quality dimension in detail, and the 3-way matching agent implements continuous matching as part of the AP control stack.

Intercompany reconciliation. For multi-entity organisations, intercompany transactions create a reconciliation challenge that is structurally similar to bank reconciliation but involves two ERP instances rather than an ERP and a bank. The same transaction appears as a payable in one entity and a receivable in another, and the two records are frequently inconsistent in timing, amount, or classification. Continuous intercompany reconciliation applies the same five practices, with the complication that both sides of the comparison are internally controlled and therefore both correctable.

Revenue and billing reconciliation. SaaS and subscription businesses face a specific reconciliation challenge: the revenue metrics used for investor reporting (ARR, MRR, churn, expansion) are derived from billing transaction records, CRM data, and accounting entries that were created by different systems with different data models. Data alignment across systems for revenue reconciliation requires a reconciliation logic that understands the relationship between a CRM opportunity, a billing subscription, and an accounting revenue recognition event, and can identify when those three records describe conflicting versions of the same commercial relationship.

FAQ

What is financial data reconciliation and why does it matter?

Financial data reconciliation is the process of comparing financial records across systems, time periods, or data sources to confirm that they represent the same underlying economic reality accurately. It matters because finance functions operate across multiple systems, ERP, banking portals, billing platforms, CRM systems, that record transactions independently and in different formats. Without systematic reconciliation, discrepancies accumulate between these records, and financial reports, payment decisions, and audit evidence are based on data that may be internally inconsistent. The consequence ranges from reporting inaccuracies to genuine financial losses from undetected errors and fraud.

What is the difference between reconciliation and matching?

Matching is one type of reconciliation operation, comparing a specific field value in one record against the corresponding field value in another. Reconciliation is the broader process that encompasses matching, normalisation (ensuring fields are comparable before matching), discrepancy categorisation (determining what kind of difference was found), resolution (correcting errors, accepting timing differences, mapping classification gaps), and documentation (recording what was compared, what was found, and what was done about it). Matching without the surrounding reconciliation infrastructure produces match results; reconciliation with full infrastructure produces a financial accuracy posture.

What does "continuous reconciliation" mean in practice?

Continuous reconciliation means that every transaction is compared against its cross-system counterpart as it occurs, or within the settlement window appropriate to the transaction type, rather than accumulated in a batch for periodic comparison. In practice, this means that the finance function's reconciliation agents are running at all times, processing new transactions as they enter connected systems, comparing them against expected counterparts, and surfacing discrepancies immediately. The result is that the unreconciled transaction population at any given moment is limited to transactions that have occurred within the current settlement window, typically hours or days, rather than the entire interval since the last reconciliation cycle ran.

How do AI agents improve financial data reconciliation compared to manual or rule-based approaches?

Manual reconciliation is limited by human bandwidth, a team can reconcile a sample of transactions, not all of them, and exception investigation quality degrades with workload. Rule-based automated reconciliation is limited by the completeness of the rule library, it handles the cases the rules anticipated and creates exceptions for everything else. AI agents handle three additional capabilities: entity resolution (recognising that "STRP-BATCH-20241203" and "Stripe settlement batch #4721" are the same entity without an explicit rule mapping), pattern-based anomaly detection (identifying transactions that are technically matched but statistically anomalous given historical patterns), and classification learning (adapting reconciliation mappings based on how human reviewers have resolved similar discrepancies in the past). The practical result is higher match rates, lower exception volumes, and exception queues that contain genuine discrepancies rather than data quality noise.

What reconciliation documentation does an external auditor expect to see?

External auditors typically expect to see evidence of: which reconciliation procedures were performed, on which data populations, at what frequency; the specific discrepancies identified during each reconciliation run; how each discrepancy was categorised and investigated; and the resolution action taken for each. For automated reconciliation, this documentation should be system-generated, timestamped, and searchable, not reconstructed from memory or assembled from email threads. The audit trail that Phacet's reconciliation agents generate provides this documentation automatically: every comparison, every exception, every resolution, every human decision, all timestamped and linked to the underlying transaction record.

How do you prioritise which financial data to reconcile first?

Prioritisation should be driven by two factors: the financial materiality of errors in that data population, and the current gap between that population's reconciliation frequency and the frequency required by the accuracy standard. The highest-priority reconciliation investments are: cash and bank data (high materiality, directly impacts payment decisions), AP invoice data (high materiality, pre-payment errors are immediately costly), and revenue data used for investor reporting (high materiality, reporting inaccuracies have legal and reputational consequences). Lower-priority but still important: intercompany balances, expense categories, and accrual accuracy.

What is the ROI of investing in systematic financial data reconciliation?

The ROI of systematic financial data reconciliation comes from three sources. Direct error prevention: the transactions that are caught and corrected before they generate a financial loss, duplicate payments, price deviations, undetected fraud. Time savings: the hours that finance teams currently spend on manual reconciliation, exception investigation, and audit preparation, redirected to analysis and decision support. Audit and reporting credibility: the reduction in audit scope, the reduction in time-to-close, and the improvement in reporting cycle efficiency that come from having financial data that is continuously validated rather than periodically reconciled.

Accuracy is not a reconciliation outcome, it is a reconciliation posture

The organisations that achieve genuinely non-negotiable financial data accuracy share a common characteristic: they do not treat reconciliation as a periodic task that produces an accuracy snapshot. They treat it as a continuous operating discipline that maintains an accuracy posture, a state in which the data in their financial systems can be relied upon for any decision at any time, not just after the monthly close.

The five best practices, source of truth hierarchy, pre-reconciliation normalisation, continuous operation, discrepancy type separation, and real-time documentation,— are not a checklist for improving existing reconciliation processes. They are the architecture of a different accuracy model: one where the question "is our financial data accurate?" has a structural answer rather than a periodic one.

Phacet's reconciliation agents implement this architecture across the finance function's reconciliation domains: bank transaction reconciliation, invoice and AP matching, supplier transaction labelling, and cash flow classification. Each agent generates the decision-grade data that the finance function needs to report, pay, and control with confidence, not at the end of a reconciliation cycle, but continuously. Book a demo to see how this posture applies to your financial data environment.

Latest Resources

Unlock your AI potential

Go further with your financial workflows — with AI built around your needs.