Why invoice matching fails at scale, and how to make data reliable enough to validate

Published on :

March 30, 2026

Most organisations that invest in invoice matching software hit the same wall six to twelve months into deployment. Match rates start high, 85%, 90% in early testing, then gradually erode as invoice volume grows, supplier relationships multiply, and the edge cases that didn't appear in the pilot become the dominant pattern in production. Exception queues fill faster than reviewers can clear them. The automation that was supposed to reduce manual work ends up generating a different kind of manual work: manually correcting mismatches, chasing missing references, fixing data that the matching engine couldn't resolve.

The standard response is to tune the matching logic. Adjust the tolerance thresholds. Add more rules. Build more exceptions into the exception handler. The match rate improves, then erodes again.

The problem is not the matching logic. The problem is the data that the matching logic operates on.

The root cause: invoice matching is a data quality problem

Invoice matching software performs a comparison operation. It takes two or more documents, an invoice, a purchase order, a delivery confirmation, and determines whether corresponding fields are equivalent within defined tolerances. The matching logic can be sophisticated: fuzzy string comparison, ML-based entity resolution, multi-dimensional tolerance scoring. But regardless of how advanced the matching algorithm is, it can only work with the data it receives.

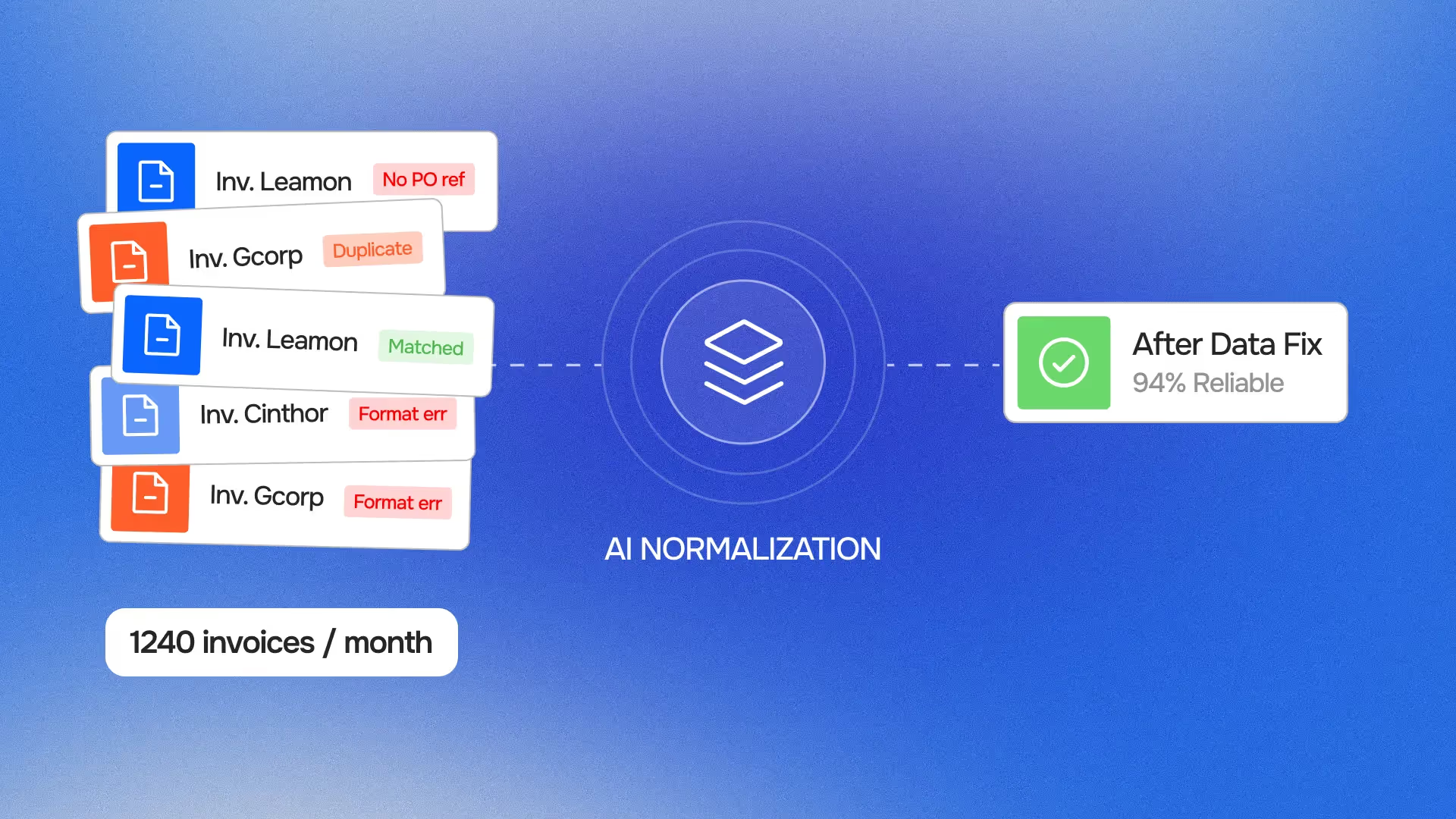

And the data that arrives at the matching engine, extracted from PDF invoices, pulled from ERP PO records, read from delivery system confirmations, is almost never clean enough at scale to match reliably without an upstream data preparation layer.

This is the gap that most invoice matching software implementations do not adequately address. They invest heavily in the matching algorithm and lightly in the data infrastructure that the algorithm requires. Data matching at any meaningful volume requires data normalisation and structured data extraction as prerequisites, not as nice-to-haves.

The 4 data quality failures that cause matching to break at scale

1. Extraction inconsistency: the same field read differently every time

Invoice matching starts with data extraction, reading the relevant fields from incoming invoice documents. For organisations processing invoices from dozens or hundreds of different suppliers, those documents arrive in dozens of different formats: different PDF layouts, different field positions, different label conventions, different precision levels for quantities and prices.

Standard OCR-based extraction approaches handle this by training template libraries, one extraction template per supplier format. This works until the supplier changes their invoice template, until a new supplier is onboarded whose format doesn't match any existing template, or until an invoice arrives from a known supplier but with a format variation (a different branch's letterhead, a legacy system's output, a modified layout from a billing platform update).

When extraction fails or produces inconsistent output, the matching engine receives malformed input. An invoice line that should read "1,000 units at €4.25" is extracted as "1.000 units at €4.25", a decimal separator interpretation error that converts 1,000 units to 1 unit and invalidates the match. A supplier name extracted as "Acme Corp" fails to match the ERP's registered "ACME Corporation Ltd". A date field extracted in DD/MM/YYYY format fails to match the PO's MM/DD/YYYY record.

None of these are matching logic failures. They are data extraction failures that manifest as matching failures. Fixing them at the matching layer by adding more exceptions is the wrong intervention, it treats the symptom rather than the cause.

Intelligent data extraction that applies validation and normalisation at the point of extraction, before data reaches the matching engine, solves this at source. Phacet's invoice data extraction layer checks field completeness, applies normalisation rules (decimal separators, date formats, currency codes, unit of measure standards), and flags extraction confidence scores that allow the matching engine to handle uncertain extractions differently from high-confidence ones.

2. Reference data inconsistency: the same entity described differently across systems

Invoice matching requires comparing entities across documents that were created by different parties in different systems. The supplier reference on an invoice is the supplier's own identifier for themselves. The supplier reference on the PO is your procurement system's identifier for that supplier. The supplier reference on the delivery confirmation is the warehouse system's identifier. All three should refer to the same entity, but they frequently don't resolve to the same string.

The same problem applies to item references. A supplier invoice line may reference a product by the supplier's own part number. The PO references it by your internal SKU. The delivery confirmation references it by the warehouse's bin location code. Three different identifiers for the same physical item, none of which match directly.

At low invoice volumes, these mismatches are resolved manually, an AP analyst recognises that "ACME Corp" on the invoice and "ACME Corporation Ltd" in the ERP are the same entity, and applies the manual override. At scale, the volume of these disambiguation decisions exceeds what manual resolution can handle, and the exception queue becomes dominated not by genuine discrepancies but by reference data inconsistencies that should never have reached the exception stage.

Data alignment across systems, maintaining a synchronised entity resolution layer that maps supplier identifiers, item references, and location codes across all connected systems, eliminates this category of false positives before matching runs. The matching engine receives normalised, aligned entity references rather than raw strings from disparate sources.

The source of truth validation principle matters here: when conflicting values appear across systems, there needs to be a defined hierarchy for which system's value is authoritative. Without this hierarchy, the matching engine has no basis for resolving conflicts, it can only flag them as exceptions and defer to human judgment.

3. Missing reference data: invoices that can't be matched because the match target doesn't exist

A matching engine can only match an invoice to a PO if the PO exists and is accessible. At scale, a significant fraction of invoices arrive without the reference data needed to locate the corresponding PO: no PO number on the invoice, a PO number that references a closed or superseded order, a PO that exists in a different ERP instance, or a PO that was created after the invoice was received.

These are not matching failures, they are upstream process failures that manifest as matching failures. The invoice cannot be matched because the match target is absent or inaccessible, not because the matching algorithm failed to find a valid correspondence.

The same pattern applies to delivery confirmations. An invoice matches a valid open PO, but no goods receipt has been recorded, the delivery is in transit, was received informally without system entry, or was recorded against the wrong PO line. The three-way match fails not because the transaction is wrong, but because the system of record for the delivery confirmation is incomplete.

Invoice matching software that handles this well distinguishes between three categories of non-match: genuine discrepancies (the invoice doesn't match the PO because the prices differ), structural absences (the invoice references a PO that doesn't exist in the accessible data), and process gaps (a PO and invoice exist but the delivery confirmation hasn't been recorded yet). Each requires a different response, and treating all three as the same exception type is a significant source of exception queue overload.

4. Temporal data drift: reference data that changes between PO creation and invoice receipt

Matching operates at a point in time, but the data it compares was created at different points in time. A purchase order was created three months ago. The delivery was confirmed six weeks ago. The invoice arrives today. In the interval between PO creation and invoice receipt, a supplier may have updated their payment terms, a price list revision may have taken effect, or an exchange rate fixing date may have passed.

When the matching engine compares the invoice's stated payment terms against the PO's recorded payment terms and finds a discrepancy, it is often not flagging an error, it is detecting a legitimate change that occurred between the two documents' creation dates and was never reconciled in the reference data.

At low volumes, these temporal discrepancies are resolved by AP analysts who know the supplier relationship well enough to recognise that the change is legitimate. At scale, no individual analyst has that context for all supplier relationships, and the matching engine has no mechanism to distinguish between "this changed legitimately" and "this is a genuine error."

This is where data trustworthiness infrastructure becomes critical: change tracking on reference data (when did this payment term change? who authorised it?), version-aware matching (compare the invoice against the PO's applicable terms at the time of the order, not the current master record), and supplier communication audit trails that document when commercial changes were agreed and by whom.

Why these failures compound non-linearly at scale

Each of the four data quality failures described above exists at low invoice volumes. An experienced AP team with 200 invoices per month can resolve them manually, they know their major suppliers well enough to recognise extraction quirks, they maintain informal entity resolution knowledge, and they have enough time per invoice to chase missing PO references.

At 2,000 invoices per month, the same four failure modes produce 10x the exception volume, but not 10x the team capacity to resolve them. The relationship between invoice volume and exception resolution effort is not linear because exceptions require contextual knowledge that doesn't scale with headcount. An AP analyst who joins the team at 2,000 invoices/month doesn't automatically inherit the institutional knowledge that the analyst who managed 200 invoices had built over two years.

This is why match rates that looked good in pilots degrade in production. The pilot ran on a representative sample of invoice volume, but not on the long tail of supplier relationships, format variations, and reference data inconsistencies that only appear when every invoice in the population is processed. The matching logic was validated against the common cases. The scale failures live in the edge cases, which make up an increasing proportion of the total as volume grows.

The financial reconciliation challenge at scale is not primarily a matching problem. It is a data infrastructure problem: how do you maintain the reference data quality, entity resolution consistency, and extraction reliability that matching requires, across a supplier population of hundreds rather than dozens, at a velocity of thousands of invoices per month rather than hundreds?

What decision-grade data means, and why matching requires it

The concept of decision-grade data is useful here. Decision-grade data is data that has been structured, normalised, validated, and aligned to the point where it can support automated decisions, not just automated comparisons.

Raw invoice data, as extracted directly from a PDF by OCR, is not decision-grade. It is a best-effort character recognition of a document format that was designed for human reading, not machine processing. Turning raw extracted data into decision-grade data requires:

- Structural normalisation: converting all quantity, price, date, and currency values to a consistent internal representation, regardless of how they appear in the source document

- Entity resolution: mapping supplier names, item references, and location codes to canonical identifiers that are consistent across all connected systems

- Completeness verification: confirming that all fields required for matching are present and populated, and routing incomplete invoices for manual data enrichment before they enter the matching queue

- Cross-document consistency: checking that the set of data that will be matched, invoice, PO, delivery confirmation, represents a coherent transaction before matching begins

Decision-grade data preparation is the upstream layer that matching software requires but rarely includes. It is not a separate product, it is the data pipeline that transforms raw inputs into inputs reliable enough for automated matching to produce trustworthy results.

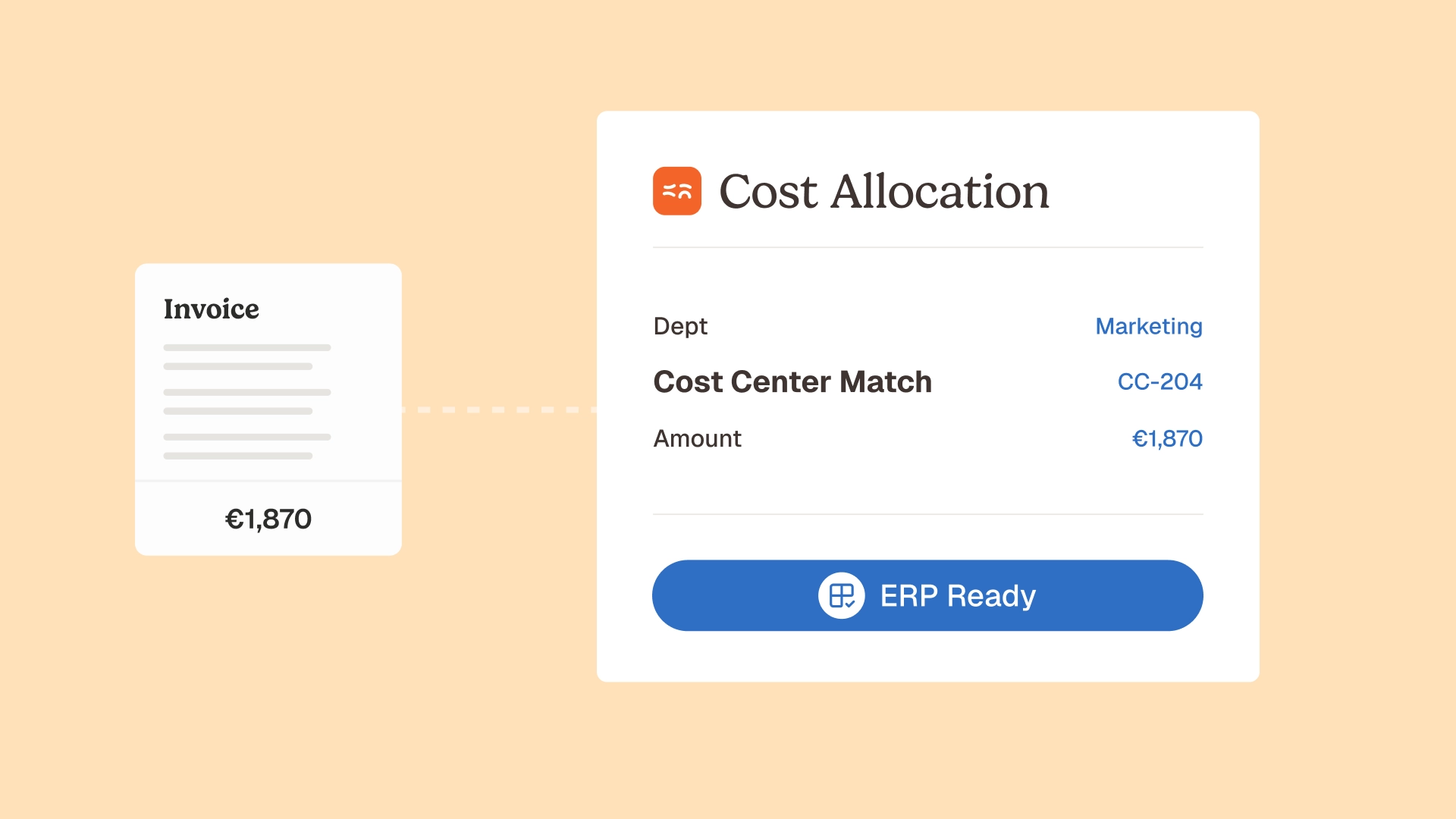

Phacet's accounting inbox agent performs this preparation step on every incoming invoice: extraction with confidence scoring, normalisation across format variations, entity resolution against the supplier master, completeness checking, and routing decisions that separate match-ready invoices from those that need human data enrichment before they can be processed. The agent for extracting payments from PDFs applies the same logic specifically to payment-relevant data fields, ensuring that the amounts, due dates, and bank references that downstream matching and payment processing depend on are structured and validated at the point of extraction.

The right architecture: matching as one step in a validated data pipeline

The practical implication of the data quality root cause is architectural. Invoice matching software should not be treated as a standalone system that receives raw inputs and produces match results. It should be treated as one stage in a validated data pipeline, and the stages upstream of matching are as important as the matching logic itself.

A validated data pipeline for invoice matching has four stages:

Stage 1 — Ingestion and extraction. Invoices arrive through multiple channels, email, supplier portal, EDI, scanned mail. Each is ingested, the relevant fields are extracted with confidence scoring, and format-specific normalisation is applied. Invoices that fail extraction quality thresholds are flagged for human data enrichment before proceeding.

Stage 2 — Entity resolution and reference data alignment. Extracted entity references (supplier names, item codes, location identifiers) are resolved to canonical identifiers using the entity resolution layer. Reference data (contracted prices, payment terms, exchange rate fixing dates) is retrieved for the relevant supplier and applied as the comparison baseline.

Stage 3 — Data matching. The normalised, entity-resolved, reference-data-enriched invoice data is compared against the corresponding PO and delivery confirmation records. Data reconciliation logic applies the matching algorithm with the appropriate tolerance configuration. Non-matches are categorised by type: genuine discrepancy, structural absence, or process gap.

Stage 4 — Exception triage and resolution. Genuine discrepancies and structural absences are routed to the exception-based review workflow with the full data context: which specific field caused the non-match, what the values were on each side, and what action is recommended. Process gaps (missing delivery confirmations) are routed to the relevant operational team to complete the record, not to the AP exception queue.

This architecture is what makes continuous finance control viable at scale, not better matching logic, but a cleaner data pipeline that delivers match-ready inputs consistently.

Phacet's 3-way matching agent operates within this pipeline. It does not receive raw invoice PDFs and produce match results. It receives normalised, entity-resolved, reference-data-enriched invoice data and applies matching logic against equally prepared PO and delivery confirmation records. The exception rate reflects genuine discrepancies, not data quality failures that should have been resolved upstream.

For the broader context of how this pipeline fits into purchase-to-pay workflows, see AI 3-way matching automation and payment traceability and the use case page for 3-way matching, which covers the end-to-end control logic in detail.

Making matching reliable: 3 operational practices

Beyond the architectural changes, three operational practices make invoice matching significantly more reliable at scale.

Practice 1 — Define and enforce invoice submission standards. The most effective intervention in the data quality pipeline is upstream of it: requiring suppliers to submit invoices that include the fields your matching process needs. Mandatory PO number on all invoices. Standardised item reference format. Consistent currency denomination aligned with the contract. Enforcing these standards, through supplier onboarding communication, portal configuration, and rejection of non-compliant invoices, reduces the extraction and entity resolution burden downstream.

Most organisations have these standards documented. Far fewer enforce them systematically. When a supplier submits an invoice without a PO reference and that invoice enters the AP queue as a "no PO match" exception, the correct response is not to resolve the exception manually, it is to return the invoice and require resubmission with the PO number included. Manual exception resolution in this case actively undermines the matching process by creating a workaround that discourages supplier compliance.

Practice 2 — Maintain supplier master data as a controlled asset. Entity resolution quality is directly dependent on supplier master data quality. A supplier master that contains duplicate records, outdated bank details, inconsistent name formats, and missing tax identifiers produces entity resolution failures at every stage of the matching pipeline.

The supplier invoice automation layer that Phacet implements treats supplier master data as an actively maintained reference, not a static ERP table. Changes to supplier records (new bank accounts, updated payment terms, name changes from mergers) are captured, versioned, and applied with the effective date that determines which version of the supplier record applies to a given invoice.

Practice 3 — Separate exception categories and measure them independently. Exception queue management is most effective when exceptions are categorised by root cause rather than treated as a homogeneous backlog. An exception rate of 8% looks very different if 6% of it represents genuine discrepancies (the matching process is working) versus if 6% represents extraction failures and missing PO references (the data pipeline is failing). Measuring and reporting exception rates by category, extraction failure, entity resolution failure, missing reference, genuine discrepancy, provides the operational intelligence needed to improve the right part of the pipeline.

Audit trail infrastructure that captures not just match results but the full resolution path, what data was present, what caused the non-match, who resolved the exception, and how, makes this measurement possible. It also produces the documentation required for audit-ready finance processes: not just evidence that each invoice was matched, but evidence of how each exception was categorised and resolved.

From match rate to control rate: the right success metric

The conventional success metric for invoice matching software is the match rate, the percentage of invoices that are automatically matched without human intervention. This is a useful operational metric, but it is not the right control metric.

An 85% match rate tells you that 85% of invoices were processed automatically. It does not tell you whether the 15% that failed to match were genuine discrepancies, data quality failures, or missing reference data, and it does not tell you whether the 85% that matched were correctly matched, or matched against incorrect reference data that happened to be consistent with the invoice.

The right control metric is the control rate, the percentage of invoices for which the control objective has been achieved. Has this invoice been validated against the correct contracted price? Has the delivery been confirmed against the right purchase order? Has the supplier entity been resolved to the canonical record? Has the exception, where raised, been resolved by a qualified reviewer with the full data context?

This reframe, from match rate to control rate, is the practical expression of pre-decision control applied to invoice processing. The control objective is not that invoices are processed automatically. The control objective is that every invoice is validated correctly before payment is authorised.

The Jinchan Group's experience illustrates the difference: after deploying Phacet's validated data pipeline, their exception volume initially increased, because the pipeline was correctly identifying data quality failures that their previous matching process had been silently passing through. Their match rate measured at the matching stage was temporarily lower. Their control rate, the proportion of invoices validated correctly against the right reference data, was significantly higher. By the end of the first quarter, both metrics had improved as supplier data quality enforcement and reference data maintenance eliminated the upstream failures. See the Jinchan case study for the full trajectory.

For a detailed treatment of what effective invoice control before payment requires, and how the data pipeline interacts with the supplier billing control agent, see the corresponding articles in the Phacet blog. The AI financial data automation and end-to-end transparency article covers the broader data pipeline context for finance operations beyond AP.

FAQ

Why does invoice matching fail at scale when it worked well in the pilot?

Invoice matching pilots typically run on a representative sample of invoice volume, selected to cover the common cases, the main suppliers, the standard formats, the typical transactions. Edge cases, supplier format variations, reference data inconsistencies, missing PO references, are present in the full invoice population but underrepresented in a limited pilot. As volume grows in production, the proportion of invoices that represent these edge cases increases, and the matching engine encounters data quality failures that the pilot never surfaced. The failure mode is not in the matching logic, it is in the data quality of the inputs that the matching logic receives.

What is invoice data normalisation and why does matching require it?

Invoice data normalisation is the process of converting extracted invoice field values into a consistent internal format, standardising decimal separators, date formats, currency codes, unit of measure conventions, and entity identifiers, regardless of how they appear in the source document. Matching requires normalised data because the comparison operation it performs is sensitive to formatting differences that are irrelevant to the underlying values. A quantity of 1,000 extracted as "1,000" from one document and "1.000" from another (due to a decimal separator difference) will fail to match even though the values are identical. Normalisation eliminates this category of false non-match before the matching algorithm runs.

What is entity resolution in the context of invoice matching?

Entity resolution is the process of mapping the identifiers that appear in source documents, supplier names, item references, location codes, to canonical identifiers that are consistent across all connected systems. Without entity resolution, "Acme Corp", "ACME Corporation Ltd", and "Acme Corporation" are treated as different entities by the matching engine, even though all three refer to the same supplier. Entity resolution maintains a mapping table that translates each observed identifier to its canonical equivalent, allowing the matching engine to compare normalised entity references rather than raw strings.

What is the difference between a match rate and a control rate?

A match rate measures the percentage of invoices that are processed automatically without human intervention at the matching stage. A control rate measures the percentage of invoices for which the control objective has been achieved: validated against the correct contracted price, against a confirmed delivery, with the correct supplier entity identified, and with any exceptions resolved correctly. An invoice can contribute to a high match rate while failing the control objective, if it matched against incorrect reference data that happened to be consistent with the invoice. Optimising for match rate can actively undermine control quality; optimising for control rate requires the full data pipeline to be operating correctly.

What should finance teams look for in invoice matching software?

Beyond the matching algorithm itself, finance teams should evaluate how matching software handles the data quality pipeline upstream of matching: extraction confidence scoring, normalisation of format variations, entity resolution across supplier naming conventions, reference data management (how contracted prices and payment terms are stored and applied), and exception categorisation (does the software distinguish between genuine discrepancies, extraction failures, and missing reference data?). Software that treats all non-matches as equivalent exceptions shifts the resolution burden onto the AP team rather than addressing the root causes of non-match.

How does AI improve invoice matching compared to rule-based matching systems?

Rule-based matching systems apply fixed matching rules: if field A from document 1 equals field A from document 2 within tolerance X, the match succeeds. AI-based matching applies learned matching logic that can handle variations the rules didn't anticipate: fuzzy string matching that recognises entity variations without explicit configuration, tolerance models that adapt to the observed error distributions for specific supplier relationships, and anomaly detection that identifies matches that are technically valid but statistically unusual given the supplier's historical billing patterns. The improvement is most significant in the entity resolution and extraction normalisation layers, the upstream data quality infrastructure, where AI models handle the variability that rule libraries cannot fully enumerate.

Reliable data is what makes matching a control, not just a process

Invoice matching at scale is a data infrastructure challenge that manifests as a matching problem. The matching algorithm matters, but it is the last step in a pipeline where every upstream step determines whether the matching algorithm has reliable inputs to work with.

Organisations that have solved invoice matching at scale have done so by investing in the three upstream layers: extraction quality, entity resolution, and reference data management. The matching logic they run on top of those layers is often simpler than what organisations that haven't invested in the pipeline are running, because clean, normalised, entity-resolved inputs require less sophisticated matching to produce correct results.

Phacet's invoice matching architecture is built on this principle. The accounting inbox agent prepares decision-grade data before it reaches the matching engine. The 3-way matching agent applies matching logic against that prepared data and produces exceptions that are genuine discrepancies, not noise from upstream data quality failures. The human-in-the-loop control layer routes those genuine exceptions to qualified reviewers with the full data context, and the audit trail captures the full resolution path for every invoice processed.

The result is matching as a control, not just as a process, where the exception rate reflects the actual error rate in the invoice population, not the failure rate of the data pipeline. Book a demo to see how this architecture applies to your invoice population and what your current exception patterns reveal about where the data quality failures are occurring.

Latest Resources

Unlock your AI potential

Go further with your financial workflows — with AI built around your needs.