Financial data quality: why poor data leads to wrong finance decisions

Published on :

April 6, 2026

The financial errors that get noticed are payment errors. A duplicate invoice paid. A supplier charged at the wrong rate. An expense claim that shouldn't have been reimbursed. These are visible, they appear in reconciliations, surface in audits, show up as variances when the numbers are eventually compared.

The financial errors that don't get noticed are decision errors. A pricing adjustment not made because the margin data was three weeks stale. A cash position overstated by unreconciled receipts, leading a CFO to approve a commitment the company couldn't comfortably afford. An ARR figure presented to the board that included churned contracts still sitting in the billing system. A supplier renegotiation that started from a flawed baseline because the spend data was inconsistently categorised across entities.

These errors never appear in a reconciliation. They leave no exception in a system log. They are simply decisions made with confidence on data that didn't deserve that confidence, and their cost is often far larger than the payment errors that get caught and fixed.

Poor financial data quality is not primarily a reporting problem. It is a decision problem. And solving it requires starting further upstream than most finance functions currently look.

The hidden cost of bad financial data: decisions, not discrepancies

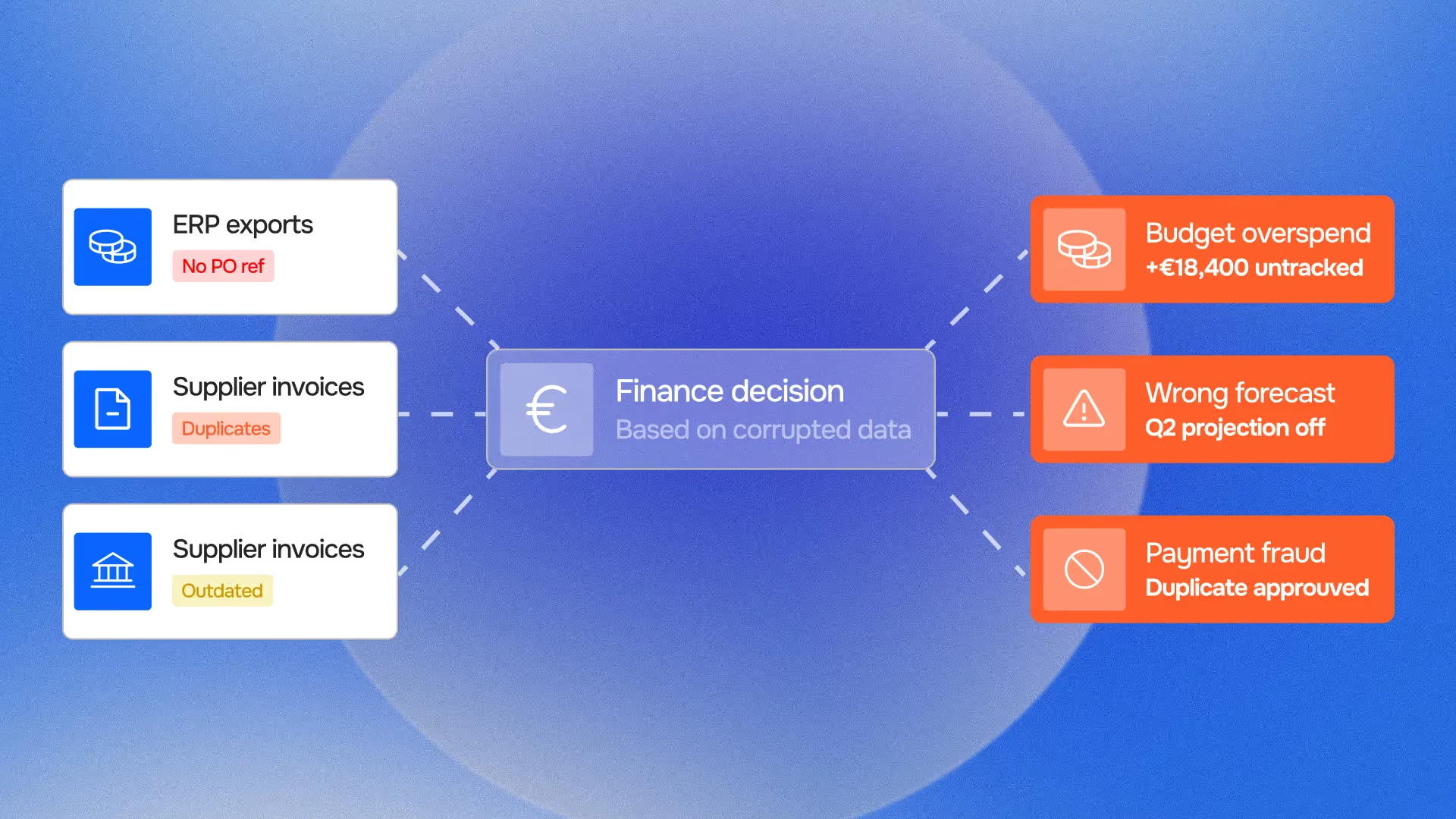

Finance teams measure data quality problems by their operational symptoms: exception rates, reconciliation backlog, close preparation time. These are real costs, but they are not the largest costs of poor financial data quality.

The largest costs are in the decisions made on data that looked reliable but wasn't.

A CFO who trusts an ARR figure that hasn't been reconciled against billing transactions is making capital allocation decisions on an estimate presented as a fact. A purchasing director who approves a supplier contract renewal based on a spend analysis that didn't normalise category labels across entities is negotiating from a position that doesn't accurately represent their actual buying power. A board that reviews a cash flow forecast built on unverified bank balances may approve or reject investments based on a position that differs from reality by days or weeks of unreconciled transactions.

None of these decision-makers are negligent. They are working with the data available to them, presented through the systems and processes that their organisation has built. The problem is not the decision, it is that the data quality infrastructure required to make the decision correctly was not in place.

Data trustworthiness, the property of financial data that allows it to be relied upon for decisions at any point in time, is not a technical characteristic. It is an organisational posture: the sum of all the processes, controls, and validation layers that stand between raw financial data and the decisions it feeds.

When that posture is weak, the consequences appear not in exception queues but in decision outcomes.

5 ways poor financial data quality corrupts decisions

1. Stale data presented as current

Financial data has a validity window. A bank balance is accurate at the moment the bank statement was generated, and becomes progressively less accurate as transactions post, settle, and are recorded with varying timing delays across connected systems. A revenue figure is accurate at the moment the billing cycle closed, and diverges from reality as customer payments arrive, contracts are modified, and recognition events occur.

When stale data is presented as current, without a timestamp, without a reconciliation date, without a flag indicating when it was last validated, the decision-maker has no mechanism to apply appropriate scepticism. The number on the dashboard looks current because the dashboard was refreshed this morning. The underlying data may not have changed since last Wednesday.

Decisions based on stale financial data carry systematic errors in predictable directions: cash positions tend to be overstated (because unreconciled outflows have not yet reduced the balance), receivables tend to be overstated (because the ageing analysis hasn't captured recent payments), and revenue figures tend to lag reality in both directions depending on the timing of the revenue recognition cycle.

The continuous finance control discipline addresses this specifically: by reconciling financial data continuously rather than periodically, the gap between "data as of the dashboard" and "data as of now" is measured in hours rather than days or weeks. But continuous reconciliation is only part of the answer, the data also needs to carry provenance: when it was last validated, against what source, and with what confidence.

2. Inconsistent categorisation across systems or entities

Every organisation that operates across multiple systems, entities, or reporting dimensions faces a categorisation consistency problem. The same supplier may be classified under "logistics" in one entity and "operations" in another. The same cost type may appear as "marketing spend" in the CRM-derived P&L and "business development" in the ERP. The same product line may be tagged with different revenue category labels depending on whether the source is the billing system or the accounting system.

When a CFO or controller aggregates this data for a management report or board pack, they are working with a categorisation layer that appears consistent (the report has clean rows and columns) but is built on underlying inconsistency. The "marketing spend by region" table may be comparing regions that have classified costs differently, producing a comparison that is arithmetically correct but economically meaningless.

The decisions that flow from this analysis, budget reallocations, headcount decisions, pricing adjustments, are based on a comparison that doesn't accurately represent the underlying commercial reality. The error is not in the arithmetic. It is in the false assumption that like-labelled categories actually contain like transactions.

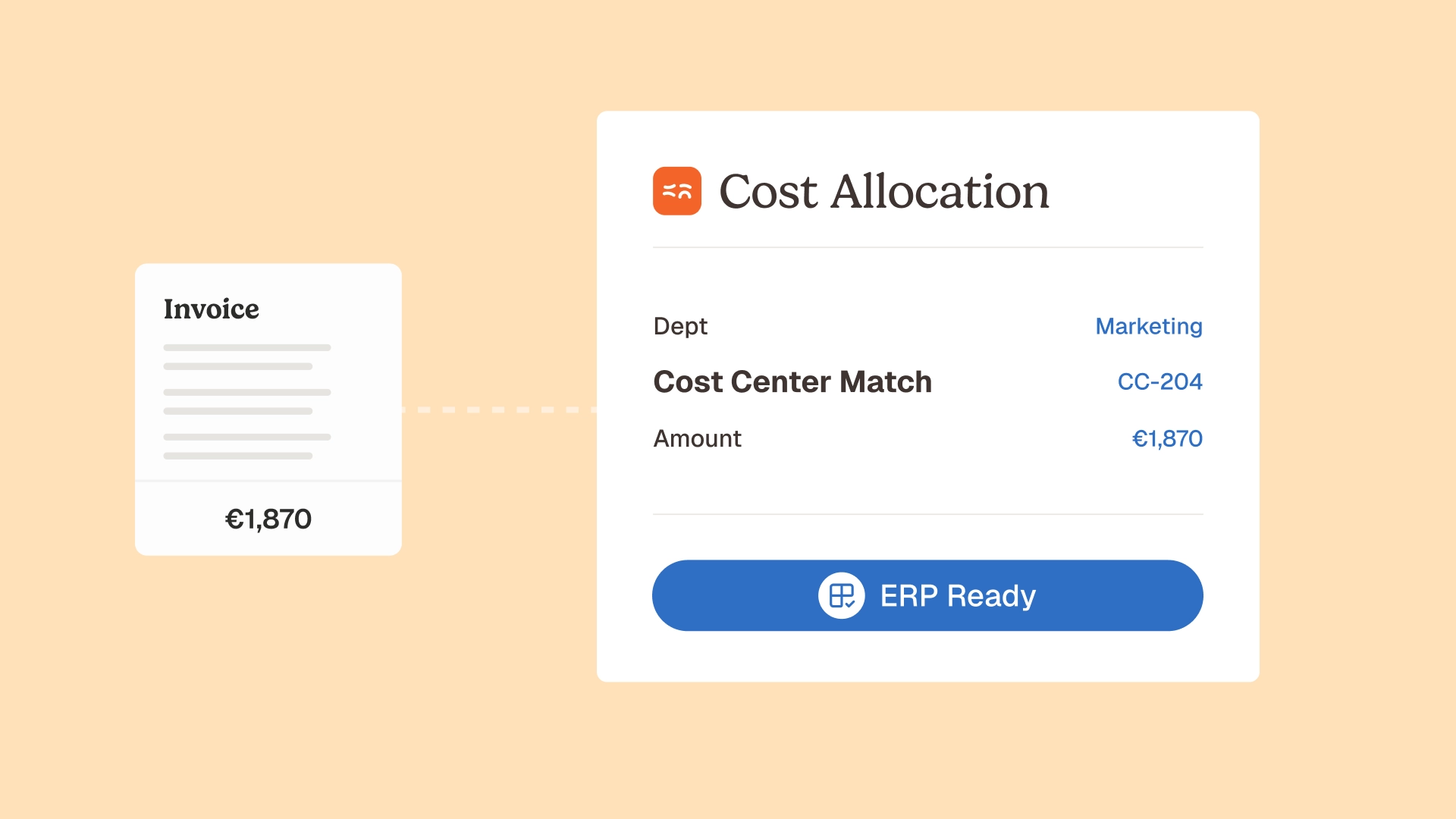

Data normalisation and data labeling applied consistently across all source systems, not just within a single system, is the prerequisite for management reporting that can support cross-entity comparisons. Phacet's agent for standardising and reclassifying accounting data at scale applies this normalisation layer across connected data sources, ensuring that category labels carry consistent economic meaning across the full reporting population.

3. Undetected errors that accumulate into material misstatements

Individual data quality errors are rarely material on their own. A single invoice processed at a price 3% above the contracted rate is not a material financial statement error. But in an organisation processing 2,000 invoices per month from suppliers with complex pricing agreements, a systematic 3% price deviation across a subset of those invoices can represent hundreds of thousands of euros annually, enough to materially affect margin reporting and the management decisions based on it.

The problem is not the existence of individual errors. The problem is the absence of a detection mechanism that identifies when individual errors are part of a systematic pattern. Sampling-based controls, the monthly review of a representative selection of invoices, can catch individual errors but cannot identify systematic patterns unless the sample is designed to surface them.

Pattern-based data quality controls, applied continuously across the full transaction population, can identify systematic deviations that individual transaction reviews would miss: a specific supplier consistently billing above contracted rates, a specific cost category with an anomalous distribution of amounts, a specific entity with a reconciliation rate that diverges from the others. These patterns are data quality signals, they indicate that the underlying data has a systematic quality problem that will produce consistent errors in any decision that relies on it.

The financial risk exposure embedded in undetected systematic errors is the primary reason that financial data quality matters beyond operational efficiency. It is not about catching individual mistakes, it is about ensuring that the data population feeding strategic decisions does not contain systematic biases that will produce consistently wrong outputs.

4. Cross-system inconsistencies mistaken for ground truth

Modern finance functions operate across a stack of systems that each hold a partial version of the financial reality: the ERP holds the accounting record, the CRM holds the commercial pipeline, the billing platform holds the revenue transaction record, the bank portal holds the cash position. Each system is internally consistent. None of them hold the same version of the financial reality.

When a decision-maker asks a question that spans these systems, "what is our current net revenue from customer segment X?", the answer depends on which system is queried. The ERP will give an accounting-based answer based on invoiced and recognised revenue. The CRM will give a pipeline-based answer that includes contracted but not yet billed revenue. The billing system will give a transactional answer based on billed amounts regardless of recognition. The bank portal will give a cash-based answer based on actual receipts.

All four answers are internally correct. None of them are the same number. If the decision-maker doesn't know which system to trust for which question, they will get an answer, but not necessarily the right one for the decision they need to make.

Source of truth validation and data alignment across systems address this by establishing which system is authoritative for each data type and reconciling cross-system values systematically. The decision-grade data preparation layer in Phacet's architecture performs this reconciliation before data reaches any reporting or analytical workflow, ensuring that the number presented for a decision reflects a consistent, cross-system-validated view rather than the output of whichever system was queried first.

5. Missing data that forces false confidence intervals

Financial decisions are made under uncertainty. The experienced finance professional manages this by knowing which parts of the data are solid and which are estimates, which figures are system-validated and which are manually compiled, which numbers carry narrow confidence intervals and which carry wide ones.

This calibration is entirely dependent on data provenance: the ability to trace a number back to its source, the process that produced it, and the controls that validated it. When data provenance is weak, when a number appears in a report without a clear trail to its source, the decision-maker has no mechanism to distinguish high-confidence data from low-confidence data. They must either treat all data as equally reliable (which understates uncertainty for the low-confidence figures) or treat all data as equally uncertain (which overstates uncertainty for the high-confidence figures).

Both errors produce decision quality problems. Treating low-confidence data as reliable produces overconfident decisions. Treating high-confidence data as uncertain produces excessively cautious decisions or decision paralysis.

Explainable decision control, the principle that every data point feeding a financial decision should be traceable to its source, the process that produced it, and the validation applied, is what enables appropriate calibration. It is not just an audit requirement. It is a decision quality requirement: the decision-maker who knows the confidence level of their data makes better decisions than the one who doesn't.

Why financial data quality degrades systematically

Understanding why financial data quality degrades helps identify where to intervene. The causes are structural rather than behavioural, they do not stem from carelessness but from the architecture of modern finance operations.

Multi-system proliferation without synchronisation. Every new system added to the finance technology stack creates a new potential source of inconsistency. Two systems that once held the same data begin to diverge the moment they update independently. Without a systematic synchronisation and reconciliation discipline, this divergence compounds over time, silently, without any error message.

Manual data handling at integration points. Data that moves between systems via manual exports, copy-paste operations, or human re-entry is data that can be modified, corrupted, or lost at every hand-off. Most finance functions have more of these manual integration points than they realise, often created as workarounds for systems that don't integrate natively. Each one is a data quality risk that is invisible until it produces an error.

Categorisation drift over time. Classification schemes that start consistent become inconsistent as new transaction types, new suppliers, and new cost categories are added without updating the underlying classification logic. The "miscellaneous" category grows. The line between adjacent categories blurs. Historical comparisons become unreliable as the same label comes to mean different things in different periods.

Sampling-based controls that miss systematic problems. As covered above, monthly sampling catches individual errors but misses patterns. The systematic quality degradation that produces management decision errors typically manifests as a pattern, a consistent deviation, a drift in one direction, that individual transaction sampling is designed to miss.

The absence of a data quality feedback loop. Most finance functions have processes for catching data quality errors when they cause a visible problem (a reconciliation fails, an audit finding is raised). Very few have processes for measuring data quality proactively, monitoring the accuracy rate of financial data across the full population, tracking the rate at which cross-system values diverge, measuring the age of the data feeding decision workflows. Without this measurement, data quality degradation is invisible until it produces a material error.

The 5 dimensions of financial data quality

Financial data quality is multi-dimensional. Improving it requires addressing all five dimensions, improving one while neglecting the others produces partial results that still leave decision-making exposed.

Accuracy: does the data correctly represent the underlying economic event? An invoice recorded at the wrong amount, a receipt misposted to the wrong account, a FX conversion applied at the wrong rate: all are accuracy failures. Accuracy controls are the most commonly deployed, they are what most AP control and reconciliation processes target. But accuracy alone is not sufficient.

Completeness: does the data include all relevant events? A P&L that doesn't capture all cost centres, a receivables report that misses invoices not yet posted in the billing cycle, a cash flow statement built on bank data that hasn't captured last-day transactions: all are completeness failures. Completeness failures produce understatement errors, the data is accurate for what it shows, but it doesn't show everything.

Consistency: does the data represent the same things in the same way across systems, entities, and time periods? The categorisation consistency problem described above is a consistency failure. So is a metric definition that changes between reporting periods without restating historical figures. Consistency failures produce comparison errors, the data is accurate in isolation but produces misleading conclusions when compared.

Timeliness: does the data reflect the current state of the underlying economic reality? Stale data presented as current is a timeliness failure. So is a reconciliation that runs monthly in a business where transactions settle daily. Data trustworthiness requires that data is not just accurate when validated but remains accurate (or is re-validated) at the point of use.

Traceability: can every data point be traced to its source, the process that produced it, and the controls applied? Traceability is the dimension that enables all the others to be verified. Without it, accuracy, completeness, consistency, and timeliness are asserted rather than demonstrated. The audit trail that Phacet's agents generate for every processed transaction addresses the traceability dimension: every number that reaches a decision workflow carries a provenance record showing exactly how it was produced and validated.

From data quality hygiene to decision-grade infrastructure

Most finance functions have some data quality practices in place. They do month-end reconciliations. They have an AP approval workflow. They run exception reports. These practices address data quality reactively, catching errors after they have entered the data pipeline, and on a sample rather than the full population.

The shift from data quality hygiene to decision-grade data infrastructure requires three changes:

From reactive to preventive. Data quality controls applied before a transaction enters the financial record are more effective than controls applied after, both because they prevent the error from propagating through downstream systems and because the context for correction is still available. Pre-payment controls and pre-decision control layers are preventive by design. Our recent analysis of continuous finance control covers the architectural shift from periodic to continuous control in detail.

From sample to population. Controls applied to a sample of transactions leave systematic quality problems undetected. AI-powered controls applied to every transaction surface patterns that sampling misses, the consistent supplier pricing deviation, the categorisation drift, the cross-system divergence that is individually small but cumulatively material. The exception-based finance review model makes this population-level control operationally viable: every transaction is checked, genuine exceptions are routed for human review, and the finance team's attention is concentrated on the exceptions that actually require it.

From system-level to cross-system. Data quality controls applied within a single system don't address the cross-system inconsistencies that produce the most damaging decision errors. Financial reconciliation across systems, comparing the ERP view against the billing platform view against the bank view against the CRM view, is the control that catches the errors that individual system controls miss. Phacet's reconciliation agents apply this cross-system logic continuously, producing a unified, validated data view that carries consistent provenance across all connected sources. The data reconciliation finance article covers the specific practices in detail.

FAQ

What is financial data quality and why does it matter for decisions?

Financial data quality is the degree to which financial data accurately, completely, consistently, and traceably represents the underlying economic reality it is supposed to capture. It matters for decisions because every financial decision, payment approval, budget allocation, pricing adjustment, investment commitment, is implicitly a bet that the data it is based on is reliable. When that data is inaccurate, incomplete, inconsistently categorised, stale, or untraceable, the decision carries a systematic error that is invisible to the decision-maker. The cost of poor financial data quality is not primarily the errors it introduces into reports, it is the errors it introduces into decisions.

What is the difference between financial data quality and financial reporting accuracy?

Financial reporting accuracy is about whether published financial statements correctly represent the company's financial position. Financial data quality is broader: it encompasses the accuracy, completeness, consistency, timeliness, and traceability of all the data that feeds financial decisions, including operational decisions made during the period, not just the periodic financial statements. A company can have technically accurate financial statements (because the errors cancel out or fall below materiality thresholds) while having poor financial data quality that systematically corrupts the mid-period decisions that drive the business.

What are the most common causes of poor financial data quality in finance functions?

The most common structural causes are: multi-system proliferation without systematic synchronisation (each new system creates a new divergence point), manual data handling at integration points (exports, re-entry, copy-paste operations that introduce errors and lose provenance), categorisation drift over time (classification logic that becomes inconsistent as new transaction types are added without updating the underlying scheme), sampling-based controls that miss systematic patterns, and the absence of proactive data quality measurement (quality problems are only visible when they cause an error, not as they develop).

How does poor financial data quality affect the closing process?

Poor financial data quality directly extends the closing cycle. The reconciling items that accumulate when cross-system data quality is weak become the primary workload of the close: matching unreconciled bank transactions to ERP records, resolving invoice discrepancies, clearing categorisation inconsistencies that prevent automated posting. Finance functions with strong continuous data quality controls consistently report 40-60% reductions in close preparation time, not because the close process changed, but because the data quality problems that used to accumulate during the period are resolved continuously rather than deferred to the close.

What is decision-grade data and how is it different from clean data?

Clean data is data with no errors, accurate, complete, and correctly formatted. Decision-grade data is data that is clean, but also carries the provenance, timeliness validation, and cross-system consistency checks that allow a decision-maker to rely on it without additional verification. A database with clean records but no timestamps, no reconciliation history, and no traceability to source documents is clean but not decision-grade. Decision-grade data can be trusted at the point of use because it carries the evidence of the controls that validated it, not just the absence of detectable errors.

How do AI agents improve financial data quality compared to manual processes?

Manual data quality processes are bounded by bandwidth, a team can check a sample of transactions, maintain consistency within a single system, and respond to errors after they are reported. AI agents improve on this in three ways: they process every transaction rather than a sample, they apply consistent validation logic without the variation that comes from different analysts applying different judgment, and they detect patterns across the full data population that sample-based reviews would miss. The intelligent data extraction agents that Phacet deploys also apply normalisation and entity resolution at the point of ingestion, preventing the format and classification inconsistencies that degrade data quality before they enter the financial record.

Where should a finance function start when addressing data quality problems?Start with the data that feeds the highest-stakes decisions, not the largest volume of transactions. For most organisations, this means: the revenue and ARR data feeding investor reporting (because errors here have the highest external consequence), the supplier invoice and payment data feeding cash and margin management (because errors here have the most direct financial impact), and the cross-entity cost data feeding resource allocation decisions (because errors here produce the most consistently wrong management decisions). For each domain, the first step is measurement: what is the current cross-system reconciliation rate, what is the error rate in the full transaction population (not the sample), and what is the age of the data at the point it feeds decision workflows?

Bad data doesn't announce itself before a decision is made

The fundamental challenge of poor financial data quality is its invisibility. A payment error announces itself, eventually, in a reconciliation or an audit or a supplier dispute. A decision error made on bad data rarely announces itself at all. The hiring decision that should have been deferred because cash was tighter than the dashboard showed. The margin target that was set too generously because the supplier price deviation data wasn't captured. The acquisition that was priced at a premium to an ARR figure that hadn't been reconciled.

These decisions don't fail spectacularly. They simply don't achieve the outcomes that better data would have enabled, and the causal link between the data quality problem and the decision outcome is never established because nobody is looking for it.

The finance function that solves financial data quality is not just solving an operational problem. It is solving a decision quality problem, across every part of the organisation that uses financial data to make decisions, which is every part of the organisation.

Phacet's data quality infrastructure applies the five dimensions, accuracy, completeness, consistency, timeliness, and traceability, across the full transaction population, continuously, with cross-system validation and a complete provenance record for every data point. The accounting inbox agent, bank reconciliation agent, supplier billing control agent, and cash flow labelling agent each contribute to this infrastructure, covering the data domains where decision errors carry the highest financial cost. Book a demo to see how Phacet's data quality layer applies to your financial environment and what your current data reveals about where the decision risk is concentrated.

Latest Resources

Unlock your AI potential

Go further with your financial workflows — with AI built around your needs.