How can finance teams validate extracted invoice data before ERP entry?

Published on: May 4, 2026

A finance team running modern OCR with 95% field-level accuracy still posts hundreds of corrupt records into the ERP every quarter. The 5% gap is not the issue: the issue is that extraction accuracy was treated as if it were validation. The two are different jobs, and entering data into an ERP is yet a third.

When those three are collapsed into one pipeline, errors that should have been caught at the validation layer flow downstream and surface during month-end close, vendor reconciliation, or a VAT audit. By then, the cost of fixing them is ten times higher than the cost of catching them at the source.

This article maps the validation stack that should run between extraction and ERP entry. Seven layers, in firing order: field-level format validation, internal consistency math, confidence-score thresholds, vendor master cross-reference, transactional context (PO, contract, GRN), standardization (GL coding, VAT allocation), and audit trail capture. Run them all on every invoice and ERP entry becomes a non-event. Skip a layer and the cost migrates from the AP queue to the close timeline.

Why extraction accuracy is not validation

Top extraction tools advertise 95% to 99% field-level accuracy. That metric is real, but it doesn't mean the document is ERP-ready. A field can be extracted correctly and still fail validation: the supplier name matches a near-look-alike in the master rather than the intended vendor, the VAT amount doesn't reconcile with rate × subtotal, the invoice date is in the future, or the IBAN is one digit off the one on file.

Extraction accuracy answers "did the model read the value off the page correctly?" Validation answers "is this value internally consistent, contextually correct, and policy-compliant?" Those are different questions and they fail in different ways.

The shift this article proposes: stop measuring extraction quality alone. Start measuring first-pass ERP-ready yield, the percentage of invoices that pass all seven validation layers and post straight through. That metric tells you how much human work the pipeline actually saves.
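As a rough illustration, first-pass ERP-ready yield can be computed from per-invoice layer results. This is a minimal sketch: the layer names, the `InvoiceResult` record, and the rule that any human touch disqualifies an invoice are assumptions made for the example, not a prescribed schema.

```python
from dataclasses import dataclass

# Hypothetical layer names, in the firing order described in this article.
LAYERS = (
    "format", "math", "confidence", "vendor_master",
    "transactional_context", "standardization", "audit_trail",
)

@dataclass
class InvoiceResult:
    invoice_id: str
    passed_layers: set[str]   # names of the layers this invoice passed
    touched_by_human: bool    # True if a reviewer had to intervene

def first_pass_yield(results: list[InvoiceResult]) -> float:
    """Share of invoices that passed every layer with no human intervention."""
    if not results:
        return 0.0
    clean = sum(
        1 for r in results
        if set(LAYERS) <= r.passed_layers and not r.touched_by_human
    )
    return clean / len(results)

results = [
    InvoiceResult("INV-001", set(LAYERS), False),
    InvoiceResult("INV-002", set(LAYERS) - {"math"}, False),  # failed math check
    InvoiceResult("INV-003", set(LAYERS), True),              # needed a reviewer
    InvoiceResult("INV-004", set(LAYERS), False),
]
print(f"{first_pass_yield(results):.0%}")  # 2 of 4 invoices -> 50%
```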

The 7 validation layers between extraction and ERP entry

1. Field-level format validation

The first check confirms each extracted field obeys its expected format. The invoice number follows the expected pattern, the invoice date is a valid date that is not in the future, amounts are decimals with the right currency precision, and VAT identifiers match the country's format. A field that fails a format check goes back to the extractor (for re-extraction or human review); it is not passed on to the routing layers.

This layer is foundational because every later check assumes typed, normalized data. A date stored as a string breaks the future-date check; an amount stored without currency precision breaks the math check.
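A format layer along these lines might look like the sketch below. The field names, the invoice-number pattern, the simplified French VAT pattern, and the two-decimal precision are all assumptions chosen for illustration; real patterns depend on your suppliers, currencies, and jurisdictions.

```python
import re
from datetime import date, datetime
from decimal import Decimal, InvalidOperation

# Hypothetical, simplified patterns for illustration only.
INVOICE_NO = re.compile(r"^[A-Z0-9][A-Z0-9\-/]{2,29}$")
FR_VAT_ID = re.compile(r"^FR[0-9A-Z]{2}[0-9]{9}$")

def validate_format(raw: dict[str, str], today: date) -> tuple[dict, list[str]]:
    """Return (typed fields, format errors) for one extracted invoice."""
    typed, errors = {}, []

    if INVOICE_NO.match(raw.get("invoice_number", "")):
        typed["invoice_number"] = raw["invoice_number"]
    else:
        errors.append("invoice_number: unexpected pattern")

    try:
        parsed = datetime.strptime(raw.get("invoice_date", ""), "%Y-%m-%d").date()
        if parsed > today:
            errors.append("invoice_date: in the future")
        else:
            typed["invoice_date"] = parsed   # stored as a date, not a string
    except ValueError:
        errors.append("invoice_date: not a valid ISO date")

    try:
        amount = Decimal(raw.get("total", ""))
        if not amount.is_finite():
            errors.append("total: not a finite number")
        elif amount.as_tuple().exponent < -2:
            errors.append("total: more than 2 decimal places")
        else:
            typed["total"] = amount.quantize(Decimal("0.01"))
    except InvalidOperation:
        errors.append("total: not a decimal number")

    if FR_VAT_ID.match(raw.get("vat_id", "")):
        typed["vat_id"] = raw["vat_id"]
    else:
        errors.append("vat_id: does not match the assumed FR format")

    return typed, errors

typed, errors = validate_format(
    {"invoice_number": "FA-2026-0042", "invoice_date": "2026-04-30",
     "total": "1200.00", "vat_id": "FR12345678901"},
    today=date(2026, 5, 4),
)
print(errors)  # no format errors for this sample
```

Returning typed values (a `date`, a `Decimal`) rather than the raw strings is what lets the later layers compare dates and do arithmetic safely.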

2. Internal consistency (math validation)

The second layer treats the invoice as a self-consistent document. Three checks anchor it: line items sum to the subtotal, the subtotal × VAT rate equals the tax line, the subtotal plus tax equals the grand total. If any of those checks fails, either something was misread or the source document itself was wrong, and either way the invoice cannot post cleanly to the GL.

Math validation also catches the long tail of extraction edge cases: a column shifted by one, a comma read as a period, two line items collapsed into one. None of those would show up on a field-level accuracy benchmark, yet all of them break ERP entry.
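The three anchor checks can be sketched with `Decimal` arithmetic. The one-cent tolerance and the single VAT rate per invoice are assumptions for the example; multi-rate invoices would need the tax check applied per rate.

```python
from decimal import Decimal

TOLERANCE = Decimal("0.01")  # assumed rounding tolerance of one cent

def check_math(lines, subtotal, vat_rate, tax, total) -> list[str]:
    """Return the list of internal-consistency failures (empty if none)."""
    failures = []
    if abs(sum(lines, Decimal("0")) - subtotal) > TOLERANCE:
        failures.append("line items do not sum to subtotal")
    expected_tax = (subtotal * vat_rate).quantize(Decimal("0.01"))
    if abs(expected_tax - tax) > TOLERANCE:
        failures.append("subtotal x VAT rate does not equal tax")
    if abs(subtotal + tax - total) > TOLERANCE:
        failures.append("subtotal + tax does not equal total")
    return failures

lines = [Decimal("400.00"), Decimal("600.00")]
ok = check_math(lines, Decimal("1000.00"), Decimal("0.20"),
                Decimal("200.00"), Decimal("1200.00"))
misread = check_math(lines, Decimal("1000.00"), Decimal("0.20"),
                     Decimal("200.00"), Decimal("1290.00"))
print(ok)       # []
print(misread)  # ['subtotal + tax does not equal total']
```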

3. Confidence scoring and human-in-the-loop thresholds

Modern extraction returns a confidence score per field. A robust validation pipeline turns those scores into routing decisions. Above 95% confidence, the field auto-clears. Between 80% and 95%, a reviewer scans the flagged fields against the source document. Below 80%, the entire invoice goes to manual review.

Confidence scoring is the spine of the human-in-the-loop architecture. Without it, every invoice gets the same treatment, which means either over-investing reviewer time on clean documents or under-investing on the ambiguous ones. With it, reviewers see only what the model is uncertain about, with the source document and the extracted value side by side.
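The routing rule above fits in a few lines. This sketch assumes scores on a 0 to 1 scale and treats a score of exactly 0.95 as needing review and exactly 0.80 as field-level review; how boundaries are handled is a policy choice the thresholds themselves do not settle.

```python
AUTO_CLEAR = 0.95  # strictly above this, a field clears automatically
MANUAL = 0.80      # strictly below this, the whole invoice goes to manual review

def route(confidences: dict[str, float]) -> tuple[str, list[str]]:
    """Return (route, fields the reviewer should look at)."""
    if any(score < MANUAL for score in confidences.values()):
        # One very uncertain field sends the entire invoice to manual review.
        return "manual_review", sorted(confidences)
    flagged = sorted(f for f, s in confidences.items() if s <= AUTO_CLEAR)
    if flagged:
        return "field_review", flagged
    return "auto_clear", []

print(route({"invoice_number": 0.99, "total": 0.97, "iban": 0.88}))
# ('field_review', ['iban'])
print(route({"invoice_number": 0.99, "total": 0.62}))
# ('manual_review', ['invoice_number', 'total'])
```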

4. Vendor master cross-reference

Once the invoice is internally consistent and its fields have cleared the confidence thresholds, the next check compares it against the vendor master. Does this supplier exist in the master file? Is the entity name an exact match or a near-look-alike? Does the IBAN, SIRET, or VAT number match the master record?

This is where a clean and enriched supplier database earns its keep. Cross-reference failures are among the strongest fraud signals: a new supplier that wasn't onboarded, a modified IBAN, a slight name variation. None of these are extraction errors, but all of them must block ERP entry.
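A simple cross-reference can be sketched with the standard library's `difflib.SequenceMatcher`. The record layout, the list of legal-form suffixes, and the 0.85 similarity cutoff are assumptions for illustration, and string similarity is only a crude stand-in for proper entity matching. The IBAN shown is a commonly used documentation example, not a real account.

```python
from difflib import SequenceMatcher

LEGAL_FORMS = {"sarl", "sas", "sa", "gmbh", "ltd", "inc"}  # assumed suffix list

def normalize(name: str) -> str:
    """Lowercase, strip punctuation, and drop legal-form suffixes."""
    tokens = name.lower().replace(",", " ").replace(".", " ").split()
    return " ".join(t for t in tokens if t not in LEGAL_FORMS)

def cross_reference(invoice: dict, master: list[dict], near: float = 0.85) -> dict:
    inv_name = normalize(invoice["supplier_name"])
    best, best_score = None, 0.0
    for vendor in master:
        score = SequenceMatcher(None, inv_name, normalize(vendor["name"])).ratio()
        if score > best_score:
            best, best_score = vendor, score
    if best is None or best_score < near:
        return {"status": "block", "reason": "supplier not in master"}
    if invoice["iban"].replace(" ", "") != best["iban"].replace(" ", ""):
        return {"status": "block", "reason": "IBAN differs from master",
                "vendor_id": best["id"]}
    if best_score < 1.0:
        return {"status": "review", "reason": "near-look-alike name",
                "vendor_id": best["id"]}
    return {"status": "ok", "vendor_id": best["id"]}

master = [{"id": "V001", "name": "ACME LOGISTICS",
           "iban": "FR7630006000011234567890189"}]

exact = cross_reference({"supplier_name": "Acme Logistics SARL",
                         "iban": "FR76 3000 6000 0112 3456 7890 189"}, master)
lookalike = cross_reference({"supplier_name": "Acme Logistic SARL",
                             "iban": "FR76 3000 6000 0112 3456 7890 189"}, master)
changed_iban = cross_reference({"supplier_name": "Acme Logistics SARL",
                                "iban": "FR76 3000 6000 0112 3456 7890 188"}, master)
print(exact["status"], lookalike["status"], changed_iban["status"])
# ok review block
```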

5. Transactional context (PO, contract, GRN)

For PO-backed spend, the validation must compare extracted invoice data to the purchase order, the goods receipt note, and the contract. Three-way matching at this stage isn't just an approval gate, it's a data-quality gate: the invoice references a PO that exists, the line items match the PO at the price and quantity agreed, the receipt confirms delivery. The full mechanics live in our guide on three-way matching automation.

For non-PO spend (services, recurring contracts), the equivalent check is contract-based: the invoiced rate matches the contract, the billing period is within the contract term, the invoiced services map to a contractual line.

This layer is where most extraction-validation pipelines stop short. They validate the invoice as a standalone document but never validate it against the transactional context that makes the spend legitimate.
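For PO-backed spend, the rule-based core of a three-way match can be sketched as follows. It assumes invoice, PO, and goods receipt lines share an exact item key (called `sku` here, a hypothetical field) and a zero price tolerance by default; matching lines whose descriptions differ is a harder problem that this sketch does not attempt.

```python
from decimal import Decimal

def three_way_match(invoice_lines: list[dict], po_lines: dict, received_qty: dict,
                    price_tol: Decimal = Decimal("0.00")) -> list[str]:
    """Compare each invoice line to the PO and the goods receipt note.

    invoice_lines: [{"sku", "qty", "unit_price"}, ...]
    po_lines:      {sku: {"qty", "unit_price"}}
    received_qty:  {sku: quantity received}
    """
    issues = []
    for line in invoice_lines:
        sku = line["sku"]
        po = po_lines.get(sku)
        if po is None:
            issues.append(f"{sku}: not on purchase order")
            continue
        if abs(line["unit_price"] - po["unit_price"]) > price_tol:
            issues.append(f"{sku}: unit price differs from PO")
        if line["qty"] > po["qty"]:
            issues.append(f"{sku}: quantity exceeds PO")
        if line["qty"] > received_qty.get(sku, 0):
            issues.append(f"{sku}: quantity exceeds goods received")
    return issues

issues = three_way_match(
    invoice_lines=[{"sku": "FLOUR-25KG", "qty": 12, "unit_price": Decimal("18.50")}],
    po_lines={"FLOUR-25KG": {"qty": 10, "unit_price": Decimal("18.50")}},
    received_qty={"FLOUR-25KG": 10},
)
print(issues)
# ['FLOUR-25KG: quantity exceeds PO', 'FLOUR-25KG: quantity exceeds goods received']
```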

6. Standardization (GL coding, VAT allocation, currency, units)

The sixth layer turns a validated invoice into a posting-ready record. GL accounts must be assigned (or proposed for review), VAT must be allocated to the right tax codes, foreign currency invoices must be converted at the right rate, units of measure must be normalized.

This is the layer that takes "data extracted from a PDF" and turns it into "an entry the ERP will accept." A standardization agent runs the GL coding from supplier history, line-item description, cost-center context, and accounting policy, and exposes its reasoning so a controller can validate the call. The standardize and reclassify accounting data agent handles this transformation at scale, with the same audit logic as every other agent in the stack.

Skipping this layer is the most common reason ERP entries get rejected or post to the wrong account: the invoice was validated as a document but never transformed into an accounting entry.
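To make the transformation concrete, here is a deliberately simple standardization sketch. The tax codes, the GL account numbers, the 80% review threshold, and the "most frequent past account" heuristic are all hypothetical; a real implementation would also draw on line descriptions, cost centers, and accounting policy.

```python
from collections import Counter
from decimal import Decimal, ROUND_HALF_UP
from typing import Optional

# Hypothetical mapping from VAT rate to ERP tax code.
VAT_CODES = {Decimal("0.20"): "FR-STD-20", Decimal("0.10"): "FR-RED-10"}

def propose_gl(supplier_history: list[str]) -> tuple[Optional[str], float]:
    """Most frequent past GL account for the supplier, with its share of postings."""
    if not supplier_history:
        return None, 0.0
    account, count = Counter(supplier_history).most_common(1)[0]
    return account, count / len(supplier_history)

def standardize(invoice: dict, fx_rate: Decimal, supplier_history: list[str]) -> dict:
    gl_account, share = propose_gl(supplier_history)
    tax_code = VAT_CODES.get(invoice["vat_rate"])
    return {
        # Convert to the base currency at the supplied rate, rounded to cents.
        "amount_base": (invoice["total"] * fx_rate).quantize(
            Decimal("0.01"), rounding=ROUND_HALF_UP),
        "tax_code": tax_code,
        "gl_account": gl_account,
        "gl_share": share,  # exposed so a controller can judge the proposal
        "needs_review": gl_account is None or share < 0.8 or tax_code is None,
    }

record = standardize(
    {"total": Decimal("1000.00"), "vat_rate": Decimal("0.20")},
    fx_rate=Decimal("0.92"),
    supplier_history=["606300"] * 9 + ["615000"],
)
print(record["amount_base"], record["tax_code"], record["gl_account"])
# 920.00 FR-STD-20 606300
```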

7. Audit trail capture of every validation decision

The final layer is meta: every check that fired, every threshold that triggered, every override that was applied must be timestamped and stored. The validation decisions themselves need to be inspectable, not just the validation outcomes.

This matters for three reasons. Auditors want evidence that controls ran, not just that they exist on paper. Reviewers need a paper trail when a supplier disputes a coding decision three months later. And the validation pipeline needs telemetry to improve: which fields fail most often, which suppliers generate the most exceptions, which thresholds are too strict or too loose. A native audit trail is the only way to answer those questions retrospectively.
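One way to capture decisions rather than just outcomes is an append-only list of timestamped events. The field names and the JSON Lines export below are assumptions for illustration, not a prescribed audit schema.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone

@dataclass(frozen=True)
class ValidationEvent:
    invoice_id: str
    layer: str
    check: str
    result: str          # "pass", "fail", or "override"
    detail: str = ""
    actor: str = "system"  # a user id when a human overrides
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())

class AuditTrail:
    def __init__(self) -> None:
        self._events: list[ValidationEvent] = []

    def record(self, **kwargs) -> ValidationEvent:
        event = ValidationEvent(**kwargs)
        self._events.append(event)  # append-only: events are never edited
        return event

    def to_jsonl(self) -> str:
        """Export one JSON object per line for storage or audit."""
        return "\n".join(json.dumps(asdict(e)) for e in self._events)

    def failures_by_check(self) -> dict[str, int]:
        """Telemetry: how often each check fails."""
        counts: dict[str, int] = {}
        for e in self._events:
            if e.result == "fail":
                counts[e.check] = counts.get(e.check, 0) + 1
        return counts

trail = AuditTrail()
trail.record(invoice_id="INV-001", layer="format", check="invoice_date", result="pass")
trail.record(invoice_id="INV-001", layer="math", check="vat_math", result="fail",
             detail="expected 200.00, found 210.00")
trail.record(invoice_id="INV-001", layer="math", check="vat_math", result="override",
             detail="rounding agreed with supplier", actor="controller-1")
print(trail.failures_by_check())  # {'vat_math': 1}
```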

Why most pipelines skip layers in practice

Every finance leader nods at this list. Few pipelines actually run all seven layers. Three reasons:

Tools own one or two layers, never the full stack. OCR vendors stop at extraction. ERP modules pick up at posting. The validation work between them lives in spreadsheets, email threads, and the controller's head. The result: layers 2, 3, 5, and 7 are partially or fully manual.

Confidence scoring is rarely surfaced. Extraction tools generate scores internally but few expose them to the AP team in a way that drives routing. Without that, every invoice gets the same review pattern (a quick eyeball check) regardless of how much the model already trusted the output.

Standardization is treated as a downstream finance task. GL coding gets done after the invoice has been "approved" for payment, which means a posting-ready record is built once approval has already been given. That's backwards: the coding should be part of validation, not part of posting.

This is why pre-ERP invoice validation needs to run as a pipeline, not as a sequence of disconnected tools. Each layer feeds the next. Each layer's failures should be observable.
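The idea of layers feeding each other with observable failures can be sketched as a small runner. The check functions here are toy placeholders, and stopping at the first failing layer is one possible design choice (an alternative is to run every layer and collect all failures).

```python
from typing import Callable

Check = Callable[[dict], list[str]]  # a layer returns its list of problems

def run_pipeline(invoice: dict, layers: list[tuple[str, Check]]) -> dict:
    """Run layers in order; stop at the first failure and keep a per-layer log."""
    log = []
    for name, check in layers:
        problems = check(invoice)
        log.append({"layer": name, "passed": not problems, "problems": problems})
        if problems:
            return {"status": "exception", "stopped_at": name, "log": log}
    return {"status": "erp_ready", "stopped_at": None, "log": log}

# Toy placeholder checks, for illustration only.
layers = [
    ("format", lambda inv: [] if inv.get("invoice_number") else ["missing invoice number"]),
    ("math", lambda inv: [] if inv["subtotal"] + inv["tax"] == inv["total"]
        else ["totals do not reconcile"]),
]

clean = run_pipeline({"invoice_number": "FA-1", "subtotal": 100, "tax": 20, "total": 120}, layers)
broken = run_pipeline({"invoice_number": "FA-2", "subtotal": 100, "tax": 20, "total": 125}, layers)
print(clean["status"], broken["status"], broken["stopped_at"])
# erp_ready exception math
```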

How AI agents structure the validation pipeline

Rule-based AP automation handled layers 1 and 2 reasonably well (format checks, simple math validation), along with exact-match cross-reference against the vendor master. It struggled on the semantic layers: a vendor name that is "Acme Logistics SARL" on the invoice and "ACME LOGISTICS" in the master, a line description that drifts between deliveries, a GL code that depends on context the rule engine can't see.

AI agents close that gap. The Phacet pattern decomposes the seven layers across specialized agents that share the same architecture:

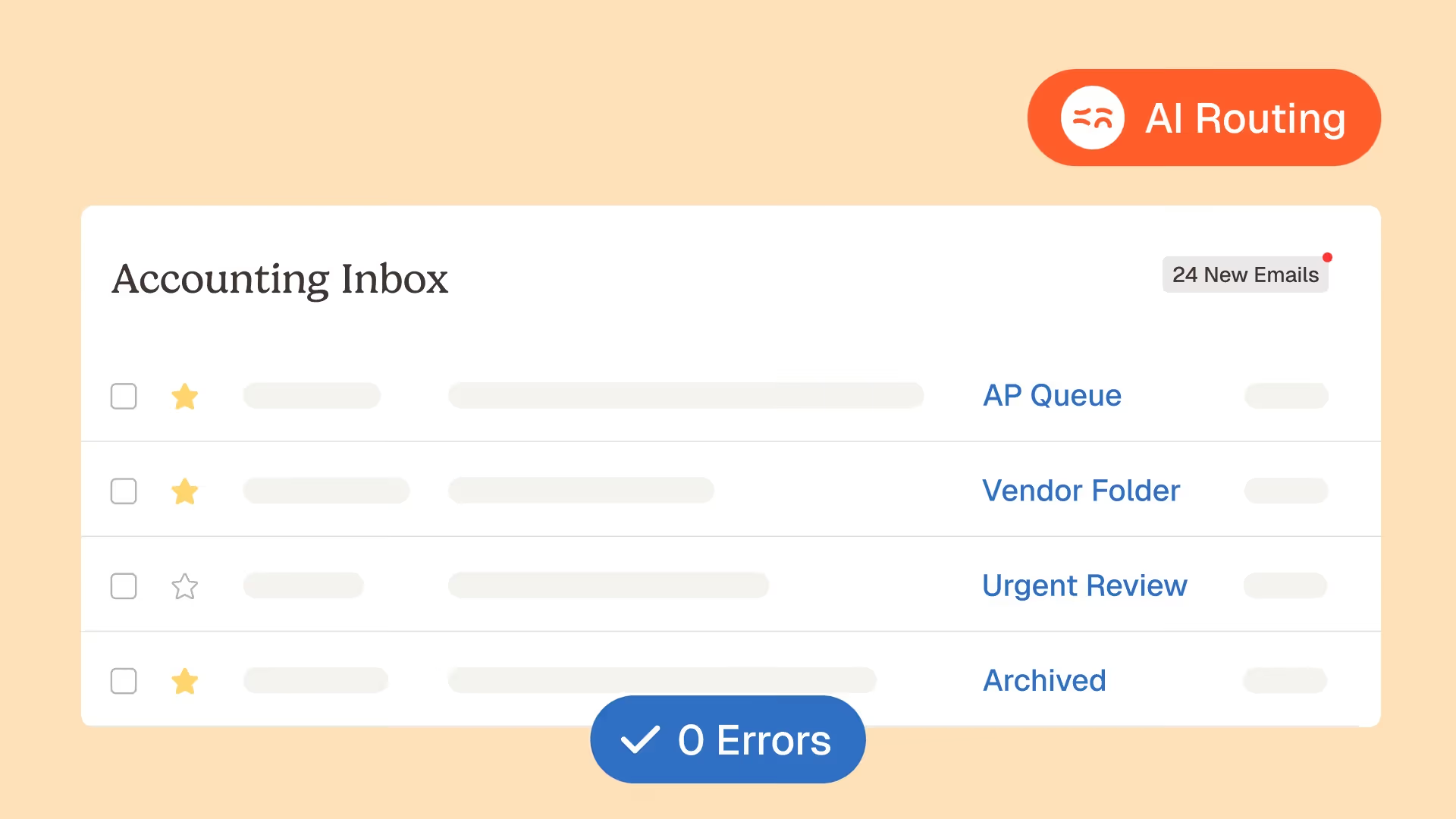

The accounting inbox agent handles intake: extraction, field-level format validation, internal consistency math, and confidence scoring per field. Each invoice arrives in a Tables view with the source document, the extracted fields, and the confidence indicator on each field, ready for layer 4 onward.

The supplier database enrichment agent maintains the master that layer 4 cross-references. It detects duplicates, surfaces near-look-alikes, flags missing tax IDs, and keeps the IBAN history for change-detection.

The 3-way matching agent covers layer 5, performing semantic matching between invoice, PO, and goods receipt with justification at every step. When line descriptions don't match exactly, the agent reasons through the variance instead of dropping the invoice into an exception queue.

The standardization and reclassification agent handles layer 6, applying the GL coding, VAT allocation, and unit normalization that makes the record posting-ready.

The ERP/CRM billing consistency agent verifies that the validated record is internally consistent with the ERP's expected schema before posting: GL accounts exist, VAT codes are valid, cost centers are open. This is the last gate before the data crosses into the ERP.

Each agent follows the same three-step pattern: it structures the input (extracts and normalizes the data), controls against a reference (master, PO, contract, accounting policy), then exposes its reasoning with a confidence score. Every step is timestamped in the audit trail, which means layer 7 isn't a separate task: it's a native byproduct of how the agents operate.

The output of this pipeline is straight-through processing for the clean majority and exception routing for the residual minority. The human reviewer sees exactly what the model wasn't confident about, with the source document and the extracted value side by side in the Vue Détail.

What 100% layer coverage looks like in production

Three customer outcomes show what changes when all seven validation layers run on every invoice:

The French Bastards (14 boutiques) absorbed the doubling of their location count without adding finance headcount. Invoices flow from email to ERP-ready records without manual data entry, and exceptions are routed only when a layer flags them. As their Head of Finance described it: "All invoices arrived in one inbox. We were opening each email, taking the attachments, and putting them into accounting." That extraction-to-ERP loop is now agent-driven.

Astotel (18 hotels) recovered roughly €5,000 per year on a single supplier by surfacing variance at the validation stage rather than discovering it post-payment. The Head of Purchasing puts it directly: "I catch errors I would never have spotted on my own." That's what a layer-5 control returns when it runs on every line of every invoice.

Jinchan gained 10 to 15 minutes per day per site director and detected anomalies at five times the previous rate. The mechanism: extraction feeds validation, which feeds posting, in a single pipeline, with confidence scoring and exception routing replacing the daily mailbox triage.

Across all three: the validation stack didn't make the data better, it made the data trusted. ERP entry stopped being a place where errors surface and started being a place where validated records land.

FAQ

What is the difference between invoice data extraction and invoice data validation?

Extraction is the process of reading values off an invoice (PDF, image, e-invoice) and turning them into structured fields. Validation is the process of checking that those fields are internally consistent, contextually correct, and policy-compliant. A field can be extracted correctly and still fail validation. See more in our glossary entry on invoice data extraction.

Why is validation needed before ERP entry rather than after?

Errors caught after ERP posting cost roughly ten times more to fix than errors caught before posting. After-the-fact corrections require reversing journal entries, reconciling vendor statements, restating reports, and sometimes amending VAT declarations. Pre-ERP validation catches the same errors when they're still fields in a Tables view, before any of those downstream effects.

What is a confidence score in invoice extraction?

A confidence score is a per-field probability that the extraction model assigns to its own output, ranging from 0 to 1 (or 0% to 100%). It tells the validation pipeline how much to trust each extracted value. High-confidence fields auto-clear, mid-confidence fields trigger reviewer attention, low-confidence fields force a manual check against the source document. The score is what makes exception-based finance review workable at scale.

How do AI agents differ from OCR for validation?

OCR converts an image into text. AI agents can read a document, validate it against rules, cross-reference it against a master file, reason about variances, propose a GL code, and explain their reasoning. The difference matters because the validation layers between extraction and ERP entry require semantic judgment (matching, reasoning, contextual coding), which OCR alone cannot provide.

What goes into the audit trail for validation?

A complete validation audit trail records: which checks ran on each invoice, the result of each check (pass, fail, override), the confidence score per field, any human review or override (who, when, why), the source document version, and the final ERP-ready record. With this trail, an auditor can reconstruct any posting decision after the fact, and a controller can answer supplier disputes without reopening the source document.

Build the pipeline, then plug in the ERP

The question "how can finance teams validate extracted invoice data before ERP entry?" sits between two assumptions that both fail in production: that extraction is good enough, and that the ERP is the validation layer. Neither holds at volume.

The answer is a seven-layer pipeline that runs between the source document and the ERP, treating extraction, validation, and posting as three jobs that need three different controls. Run all seven layers on every invoice and the ERP becomes the simple destination it was meant to be: a system of record, not a system of corrections.

Phacet customers typically deploy the accounting inbox agent first (layers 1 to 3), the supplier database agent and 3-way matching agent next (layers 4 and 5), and the standardization agent to close the loop on layer 6. The first agent is in production in under two weeks, with the audit trail (layer 7) live from day one because every Phacet agent runs natively on it.

Extraction was the easy part. Validation is where the trust gets built.
